Avoiding Pitfalls in Server Load Testing: A Practical Guide from Single Load to Chaos Testing

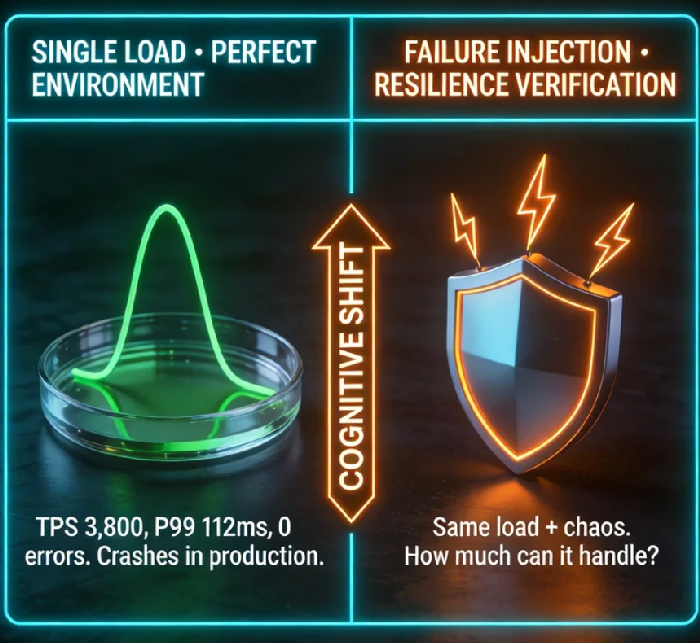

Your load test passed. Then production crashed. Here's why.

The Lie We All Believe

We treat load testing like a final exam. Set up the tool, ramp up users, measure TPS, check the green checkmark, deploy.

This works perfectly—until it doesn't.

The problem isn't your tool. It's your assumptions. You're testing in a sterile lab. Production is a war zone.

Three Pitfalls That Kill Systems

1. You only test the happy path.

Your script simulates perfect users who never refresh, never stall, and never contend with each other for the same resource. Real users do all three.

2. You ignore dependencies.

Your API passes with flying colors—when Redis responds in 2ms. What happens at 200ms? What happens when Redis is down? Your load test never asks.
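A minimal sketch of asking those questions in code, assuming a cache client you can wrap. `cache_get`, `load_from_origin`, and `handle_request` are hypothetical stand-ins, not a real Redis API, and the 100ms timeout is an illustrative number:

```python
import time
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout

_executor = ThreadPoolExecutor(max_workers=4)

def cache_get(key, injected_delay_s=0.002, down=False):
    """Hypothetical stand-in for a Redis GET; the delay and outage are injected."""
    if down:
        raise ConnectionError("cache unreachable")
    time.sleep(injected_delay_s)
    return f"cached:{key}"

def load_from_origin(key):
    """Hypothetical slow path, e.g. a database read."""
    return f"origin:{key}"

def handle_request(key, cache_timeout_s=0.1, **fault):
    """Serve from cache, but fall back if the cache is slow or down."""
    future = _executor.submit(cache_get, key, **fault)
    try:
        return future.result(timeout=cache_timeout_s)
    except (FutureTimeout, ConnectionError):
        return load_from_origin(key)

print(handle_request("user:1"))                        # healthy cache (2ms)
print(handle_request("user:1", injected_delay_s=0.3))  # slow cache (300ms)
print(handle_request("user:1", down=True))             # cache outage
```

The timeout here only frees the request; the worker thread keeps waiting on the slow call. In a real service the timeout would more likely belong on the client socket itself, which is exactly the kind of gap a test like this is meant to surface.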

3. You trust autoscaling.

CloudWatch aggregates metrics in 60-second intervals. Instances take 90–120 seconds to join the load balancer. Your traffic doubled in 20 seconds. Do the math: 60 seconds to register the spike, plus 90–120 seconds to bring new capacity online. You just lost up to three minutes of user experience.
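The arithmetic can be written down as a rough sketch. The function name and the `evaluation_periods` parameter are assumptions for illustration (an alarm that fires after a single metric period); alarms that wait for several periods make the gap longer:

```python
def autoscaling_gap_seconds(metric_period_s, evaluation_periods, instance_warmup_s):
    """Rough time between a traffic spike and new capacity serving requests."""
    detection_s = metric_period_s * evaluation_periods  # time to notice the spike
    return detection_s + instance_warmup_s              # plus time to boot and register

# Numbers from the text: 60 s metric interval, 90-120 s for an instance to join.
best = autoscaling_gap_seconds(60, 1, 90)
worst = autoscaling_gap_seconds(60, 1, 120)
print(f"Capacity gap: {best}-{worst} s ({best / 60:.1f}-{worst / 60:.1f} min)")
```

With these inputs the gap comes out at 150 to 180 seconds, which is where the "three minutes" comes from.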

Chaos Testing: Stop Proving, Start Discovering

Netflix kills production instances on purpose. Not because they're reckless—because they'd rather find the gap at 2 PM on Tuesday than 8 PM on Saturday.

You don't need Chaos Monkey. You need one hypothesis, one injection, one measurement.

This week, try this:

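During your next load test, add 50ms of latency on the network interface that carries your database traffic. Note that this delays all outbound traffic on eth0, not just the database port:

```bash
# Needs root. Remove the delay afterwards with: tc qdisc del dev eth0 root
tc qdisc add dev eth0 root netem delay 50ms
```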

Watch your P99 response time. Watch your connection pool. Watch your team's face when they realize this single change just doubled latency.

That's not failure. That's discovery.
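Why a small delay can hit the connection pool so hard can be sketched with Little's law (average in-flight work = arrival rate × time in system). The traffic figures below are hypothetical, and the sketch assumes a connection is held only while a query is in flight:

```python
import math

def connections_needed(requests_per_s, queries_per_request, query_time_ms):
    """Little's law: average in-flight queries = arrival rate x time per query."""
    return math.ceil(requests_per_s * queries_per_request * query_time_ms / 1000)

# Hypothetical service: 2000 req/s, 3 queries per request, 5 ms per query.
baseline = connections_needed(2000, 3, 5)
# Same load with 50 ms added to every query round trip.
degraded = connections_needed(2000, 3, 5 + 50)
print(f"baseline: {baseline} connections, with +50 ms: {degraded} connections")
```

Under these assumptions the same traffic needs 30 connections at baseline and 330 with the delay, so a pool sized for the happy path is exhausted long before CPU or memory show any stress.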

Four Metrics That Actually Matter

Forget average response time. It lies.
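A small illustration of how the average hides the tail, using made-up latencies (98 fast requests and 2 very slow ones) and a nearest-rank percentile; other percentile definitions interpolate and give slightly different values:

```python
import math

def percentile(samples, p):
    """Nearest-rank percentile: smallest value with at least p% of samples at or below it."""
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]

# Hypothetical sample: 98 requests at 20 ms, 2 requests at 2000 ms.
latencies_ms = [20] * 98 + [2000] * 2
mean_ms = sum(latencies_ms) / len(latencies_ms)
p50_ms = percentile(latencies_ms, 50)
p99_ms = percentile(latencies_ms, 99)
print(f"mean={mean_ms:.1f} ms  p50={p50_ms} ms  p99={p99_ms} ms")
```

The mean of about 60 ms describes no request that actually happened: most took 20 ms, and the unlucky ones waited two seconds.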

| Metric | What It Reveals |
|---|---|
| P99 latency | Your real user experience |
| CPU %steal | Your noisy neighbor problem |
| Swap (si/so) | You're out of RAM; test invalid |
| Single-core QPS | Your architecture efficiency |

Track these. Everything else is noise.
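If your monitoring does not already report %steal, it can be approximated on Linux from two snapshots of the aggregate `cpu` line in `/proc/stat`. This is a sketch under that assumption; the sample lines below are invented, and the function name is illustrative:

```python
def steal_percent(before: str, after: str) -> float:
    """Percent of CPU time stolen between two 'cpu' lines from /proc/stat."""
    def fields(line):
        # Field order: user nice system idle iowait irq softirq steal
        return [int(x) for x in line.split()[1:9]]

    deltas = [b - a for a, b in zip(fields(before), fields(after))]
    total = sum(deltas)
    return 100.0 * deltas[7] / total if total else 0.0

# Invented snapshots taken some seconds apart during a load test.
snapshot_1 = "cpu 1000 0 500 8000 100 0 50 350"
snapshot_2 = "cpu 1600 0 800 8900 150 0 50 500"
print(f"steal: {steal_percent(snapshot_1, snapshot_2):.1f}%")
```

For these two snapshots the result is 7.5%, meaning the hypervisor gave that share of the interval to other tenants. Sustained steal during a test suggests the numbers say more about the neighbours than about your code.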

The Only Question That Matters

Don't ask: "What tool should we use?"

Ask: "What don't I know about my system?"

The answer is always something. A connection pool with no timeout. A cache that expires too aggressively. A query that works at 10k rows and dies at 10M.

Load testing isn't a gate. It's a flashlight. Stop using it to stamp approvals. Start using it to find dark corners.

A colleague once told me: "The most reliable systems I've worked on weren't the ones that passed every test. They were the ones that broke quarterly, added monitoring, added fallbacks—and five years later, couldn't be brought down."

So break yours on purpose. Find the gap. Fix it.

Then break it again.