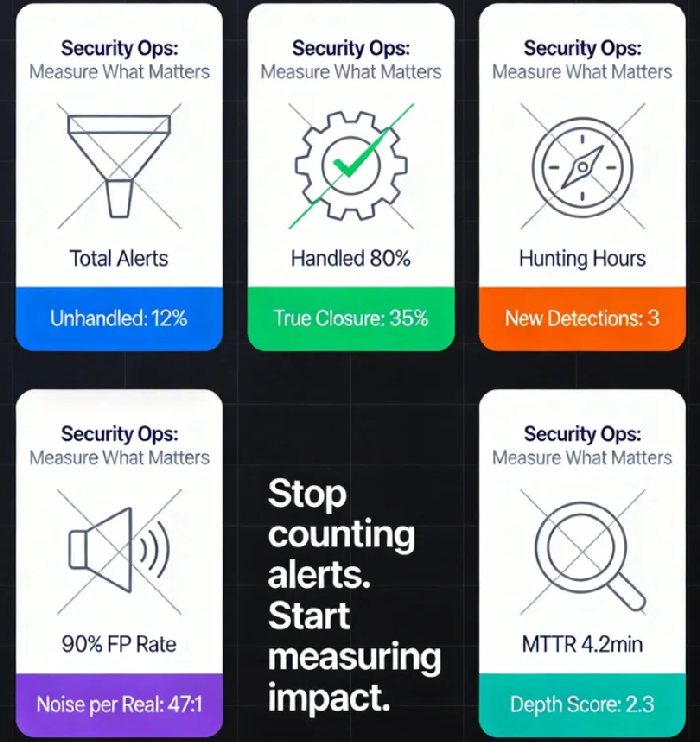

Stop Counting Alerts: Five Counter-Intuitive Metrics That Actually Measure Security Ops Effectiveness

It's Monday morning. The security team gathers around the dashboard, and the leader beams with pride. "Last week was our most productive week ever. We processed 2,847 alerts, with a mean time to respond of just 4.2 minutes. We blocked 312 potential threats."

The room nods approvingly. But in the back row, a bleary-eyed analyst who just finished the night shift whispers to her colleague: "2,400 of those were the same false positive rule. I clicked 'ignore' 2,400 times. And the 'threats' we blocked? Mostly port scans from a misconfigured internal scanner."

This scene plays out in Security Operations Centers (SOCs) every single day. We've become masters of measuring activity while being dangerously blind to effectiveness. We worship at the altar of alert volume, response speed, and ticket counts—metrics that feel objective but are, in fact, deeply misleading.

Here's the uncomfortable truth that the dashboard won't tell you: A high number of alerts is rarely a sign of strong security. It's usually a sign of a broken detection program. And "fast response time" to a false positive isn't efficiency; it's a sophisticated form of organizational self-deception.

The real question isn't "How many alerts did we handle?" It's "How many alerts actually mattered, and what did we learn from them?"

If you're ready to stop counting beans and start measuring what counts, here are five counter-intuitive metrics that will tell you far more about your security operations than your SIEM's default dashboard ever will.

Metric 1: The Unhandled Alert Percentage

The Counter-Intuition: What you don't respond to matters more than what you do.

Most SOCs obsess over "coverage" and "response rates." But the most dangerous alerts are the ones that never get processed at all—the ones dropped due to volume caps, the ones buried in a queue that no one ever reaches, the ones marked "informational" and never reviewed.

Attackers are masters of hiding in these gaps. They know that if they generate just enough noise, some signals will fall through the cracks. They count on your team being too busy with the 2,800 "urgent" alerts to notice the one that actually matters.

How to Measure It:

Calculate the percentage of alerts that are:

Dropped by your SIEM due to rate limiting

Not triaged by a human analyst within 24 hours

Closed without any investigation notes (just "closed by automation" or "mass-closed")

Mandiant's M-Trends 2023 report put the global median attacker dwell time at 16 days. Those 16 days are rarely silent: they tend to be filled with alerts, many of which likely landed in someone's "unhandled" bucket.

The Target: This number should be as close to zero as possible. If it's not, you're not measuring your security posture; you're measuring your capacity to ignore problems.
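The three criteria above can be sketched in a few lines of Python. This is a minimal illustration, not a SIEM integration: the `Alert` fields, the `BOILERPLATE_NOTES` set, and the function names are all hypothetical, and you would map them onto whatever your own alert export provides.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional

# Assumption: the 24-hour triage window from the criteria above.
TRIAGE_WINDOW = timedelta(hours=24)
# Assumption: closure notes that carry no investigative content.
BOILERPLATE_NOTES = {"", "closed by automation", "mass-closed"}


@dataclass
class Alert:
    """Hypothetical alert record; field names are illustrative only."""
    created_at: datetime
    dropped_by_rate_limit: bool = False
    first_triaged_at: Optional[datetime] = None
    closed: bool = False
    investigation_notes: str = ""


def is_unhandled(alert: Alert, now: datetime) -> bool:
    """Return True if the alert meets any of the three 'unhandled' criteria."""
    if alert.dropped_by_rate_limit:
        return True
    if alert.first_triaged_at is None:
        # Still untriaged: counts only once the window has elapsed.
        if now - alert.created_at > TRIAGE_WINDOW:
            return True
    elif alert.first_triaged_at - alert.created_at > TRIAGE_WINDOW:
        return True
    notes = alert.investigation_notes.strip().lower()
    return alert.closed and notes in BOILERPLATE_NOTES


def unhandled_alert_percentage(alerts: List[Alert], now: datetime) -> float:
    """Percentage of alerts that were dropped, untriaged, or closed blind."""
    if not alerts:
        return 0.0
    unhandled = sum(is_unhandled(a, now) for a in alerts)
    return 100.0 * unhandled / len(alerts)
```

An alert that is younger than 24 hours and still waiting in the queue is deliberately not counted, so the number reflects missed work rather than work in progress.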

Metric 2: The Automation True-Closure Rate

The Counter-Intuition: Automation isn't about "handling" alerts; it's about finishing them.

Many teams proudly announce: "We've automated 80% of our alert handling!" But when you dig deeper, you find that "handling" means "created a ticket" or "sent a Slack notification." The alert's lifecycle hasn't ended; it's just been moved to a different queue.

True automation closure means the threat is neutralized, the system is verified clean, and evidence is logged—all without human intervention. Anything less is just re-routing work, not reducing it.

How to Measure It:

Calculate the percentage of automated actions that result in a complete, verifiable closure:

Action executed: IP blocked, host isolated, account disabled

Effectiveness verified: System confirmed the action succeeded

Evidence archived: Full context logged for future reference

No further human investigation required

The Question: If your automation fired but an analyst still had to check the work, did automation really save time?
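As a rough sketch, the four closure conditions can be treated as flags on each automated action, with only the actions that satisfy all four counting as true closures. The `AutomatedAction` record and its field names are assumptions made for illustration; a real SOAR platform will expose this information differently.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class AutomatedAction:
    """Hypothetical record of one automated response; fields are illustrative."""
    action_executed: bool         # e.g. IP blocked, host isolated, account disabled
    effectiveness_verified: bool  # the system confirmed the action succeeded
    evidence_archived: bool       # full context logged for future reference
    needed_human_followup: bool   # an analyst still had to investigate


def is_true_closure(action: AutomatedAction) -> bool:
    """A true closure satisfies all four conditions at once."""
    return (
        action.action_executed
        and action.effectiveness_verified
        and action.evidence_archived
        and not action.needed_human_followup
    )


def true_closure_rate(actions: List[AutomatedAction]) -> float:
    """Percentage of automated actions that ended the alert's lifecycle."""
    if not actions:
        return 0.0
    closed = sum(is_true_closure(a) for a in actions)
    return 100.0 * closed / len(actions)
```

An action that only created a ticket or sent a notification fails the first check, so it never inflates the rate.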

Metric 3: Threat Hunting Yield Rate

The Counter-Intuition: The most important threats aren't in your alert queue—they're waiting to be found.

Alerts are reactive. They tell you what your existing rules have detected. Threat hunting is proactive: it assumes compromise and actively searches for what your rules missed. A SOC that spends all its time on the alert queue is a SOC that will only find the problems it already knows about.

But "threat hunting" can easily become "random log browsing" without a clear way to measure its value. Enter the Yield Rate.

How to Measure It:

Track the number of meaningful, actionable findings generated per hunting hour (or per hunting campaign). A "meaningful finding" is:

A previously undetected threat actor presence

A new attack technique not covered by current detections

A critical misconfiguration or blind spot in your monitoring

The Rule: If your team hunts for 40 hours and finds nothing, you're either extraordinarily secure or—far more likely—you're not hunting effectively.
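One way to keep the yield calculation honest is to record each hunting campaign with its hours and its findings, counting only findings that match the three categories above. The sketch below assumes a hypothetical `HuntCampaign` record and `FindingType` enum; neither comes from any particular tool.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class FindingType(Enum):
    """The three kinds of meaningful finding listed above."""
    UNDETECTED_ACTOR = "previously undetected threat actor presence"
    NEW_TECHNIQUE = "attack technique not covered by current detections"
    MONITORING_GAP = "critical misconfiguration or monitoring blind spot"


@dataclass
class HuntCampaign:
    """Hypothetical record of one hunting campaign."""
    name: str
    hours: float
    findings: List[FindingType] = field(default_factory=list)


def yield_per_hour(campaigns: List[HuntCampaign]) -> float:
    """Meaningful findings per hunting hour across all campaigns."""
    total_hours = sum(c.hours for c in campaigns)
    if total_hours == 0:
        return 0.0
    total_findings = sum(len(c.findings) for c in campaigns)
    return total_findings / total_hours


def zero_yield_campaigns(campaigns: List[HuntCampaign]) -> List[str]:
    """Names of campaigns that consumed hours but produced no findings."""
    return [c.name for c in campaigns if c.hours > 0 and not c.findings]
```

Listing the zero-yield campaigns separately makes it easier to review whether the hypothesis, the data sources, or the technique needs to change.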

Real-World Example: A financial services firm mandated that every security analyst spend 4 hours per week on threat hunting. In six months, they discovered 17 attack patterns their detection rules had missed, including three that had been active for over 30 days.

Metric 4: The Signal-to-Noise Ratio (But Not How You Think)

The Counter-Intuition: It's not the percentage of false positives that matters; it's the absolute volume of them.

We all track "false positive rate"—the percentage of alerts that aren't real. But consider two scenarios:

Scenario A: 100 alerts total, 90 false positives (90% FP rate)

Scenario B: 10,000 alerts total, 9,000 false positives (90% FP rate)

Both have a 90% false positive rate. But in Scenario B, an analyst must wade through 9,000 pieces of garbage to find 1,000 real alerts. That's not a 90% problem; that's a "burying the signal under a mountain of noise" problem.

This is the Signal Submersion Ratio: the number of false positives an analyst must filter out for each real alert.

How to Measure It:

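Calculate: (Total False Positives / Total True Positives), and report it next to the absolute false-positive count for the same period. The ratio alone is 9:1 in both scenarios above; the raw count is what separates them.

If the ratio exceeds 50 (meaning 50+ false positives for every real threat), your detection rules aren't just noisy; they're actively harming your ability to detect real threats by exhausting your team.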
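A short worked example helps here. Scenario A and Scenario B both come out at 9 false positives per true positive, so the sketch below returns the absolute false-positive count alongside the ratio and flags the threshold of 50 from the text. The `NoiseReport` type and function name are hypothetical.

```python
import math
from typing import NamedTuple

# Threshold taken from the text: more than 50 false positives per real threat.
SUBMERSION_THRESHOLD = 50


class NoiseReport(NamedTuple):
    submersion_ratio: float   # false positives per true positive
    false_positives: int      # absolute noise volume for the period
    exceeds_threshold: bool


def noise_report(false_positives: int, true_positives: int) -> NoiseReport:
    """Compute the ratio and keep the raw count next to it."""
    if true_positives == 0:
        # No real alerts at all: any noise is unbounded relative to signal.
        ratio = math.inf if false_positives else 0.0
    else:
        ratio = false_positives / true_positives
    return NoiseReport(ratio, false_positives, ratio > SUBMERSION_THRESHOLD)


scenario_a = noise_report(false_positives=90, true_positives=10)
scenario_b = noise_report(false_positives=9_000, true_positives=1_000)

# Same ratio, very different workload.
print(scenario_a.submersion_ratio, scenario_a.false_positives)  # 9.0 90
print(scenario_b.submersion_ratio, scenario_b.false_positives)  # 9.0 9000
```

Reporting both numbers keeps the ratio from hiding a hundredfold difference in analyst workload.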

The Insight: A single high-quality detection that produces 5 false positives a day is far more valuable than 100 low-quality rules that together produce 500. The goal isn't coverage; it's clarity.

Metric 5: Investigation Depth Score

The Counter-Intuition: Speed kills understanding. The fastest responders aren't the best responders; they're often the most superficial.

"Mean Time to Respond" (MTTR) is the sacred cow of security metrics. But MTTR measures how quickly you close an alert, not how well you understand it. A fast response to a false positive is meaningless. A fast response to a real threat that only addresses the symptom is dangerous.

What matters is investigation depth—how thoroughly your team understands each significant incident.

How to Measure It:

Create a simple scoring rubric for every investigated alert:

| Score | Criteria |
|---|---|
| 0 | Closed with no comment |
| 1 | Comment present but only repeats alert data ("CPU was high") |
| 2 | Basic investigation documented ("Checked process list, found nothing unusual") |
| 3 | Root cause identified and documented ("Confirmed this is a recurring cron job; not malicious") |
| 4 | Root cause identified plus recommendation for prevention or detection improvement |

Track the average depth score across your team each month. Watch what happens when analysts realize they're being measured not on speed, but on quality of understanding.
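The monthly average is simple to compute once each investigated alert carries a rubric score. The sketch below assumes scores are recorded as integers from 0 to 4; the `RUBRIC` dictionary paraphrases the table, and the function names are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable, List

# Short labels paraphrasing the rubric above.
RUBRIC: Dict[int, str] = {
    0: "Closed with no comment",
    1: "Comment only repeats alert data",
    2: "Basic investigation documented",
    3: "Root cause identified and documented",
    4: "Root cause plus prevention or detection recommendation",
}


def validate_scores(scores: Iterable[int]) -> List[int]:
    """Reject any score that is not defined in the rubric."""
    checked = list(scores)
    for score in checked:
        if score not in RUBRIC:
            raise ValueError(f"score {score!r} is not in the rubric")
    return checked


def average_depth(scores: Iterable[int]) -> float:
    """Mean investigation depth for one reporting period."""
    checked = validate_scores(scores)
    if not checked:
        return 0.0
    return sum(checked) / len(checked)


def depth_distribution(scores: Iterable[int]) -> Dict[int, int]:
    """Count of investigations at each rubric level, including empty levels."""
    counts = Counter(validate_scores(scores))
    return {level: counts.get(level, 0) for level in RUBRIC}
```

The distribution is worth reporting next to the average, since a mean of 2.0 can hide a team split between zeros and fours.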

The Result: Teams shift from "close it fast" to "understand it deeply." They start writing better documentation, identifying root causes, and—most importantly—preventing the same problems from recurring.

The New Security Operations Dashboard

Imagine a weekly review where, instead of reporting "we handled 3,000 alerts," you report:

Unhandled Alert Percentage: Down to 2% (from 15% last quarter)

Automation True-Closure Rate: 65% (up from 30%)

Threat Hunting Yield: 3 new detection gaps identified

Signal Submersion Ratio: 12:1 (down from 47:1)

Average Investigation Depth: 3.2 (up from 1.8)

Which of these two reports tells you more about whether your security is actually improving?

The first report (3,000 alerts) tells you your team is busy. The second tells you they're effective—they're seeing more real threats, missing fewer, understanding them better, and systematically improving the detection pipeline.

The Cultural Shift

Adopting these metrics isn't just a reporting change; it's a cultural transformation. It means:

Rewarding quality over quantity: The analyst who deeply investigates 10 alerts and prevents a recurrence is more valuable than the one who clicks "close" on 200.

Accepting that less noise is a sign of maturity: Reducing alert volume isn't "doing less work"; it's "building a better detection program."

Valuing prevention over reaction: The best security operations team isn't the one that responds fastest; it's the one that makes major incidents increasingly rare.

So the next time someone in a Monday morning meeting proudly announces "we handled 3,000 alerts last week," ask the question that matters:

"How many of them were real? How many were fully understood? And what did we learn from them that will make next week better?"

The numbers on your SIEM dashboard are just data. The real measure of your security operations team is whether, week after week, you're building a system that needs to fight fewer fires because you're systematically eliminating the conditions that start them.

Stop counting alerts. Start measuring what counts. Your team—and your organization's security—will thank you.