Let's start with a number that should make every engineer's stomach drop: 6 hours and 7 minutes. That's how long Cloudflare, one of the world's most sophisticated infrastructure companies, spent in the dark on February 20, 2026, as roughly 1,100 customer prefixes were systematically withdrawn from its global network.

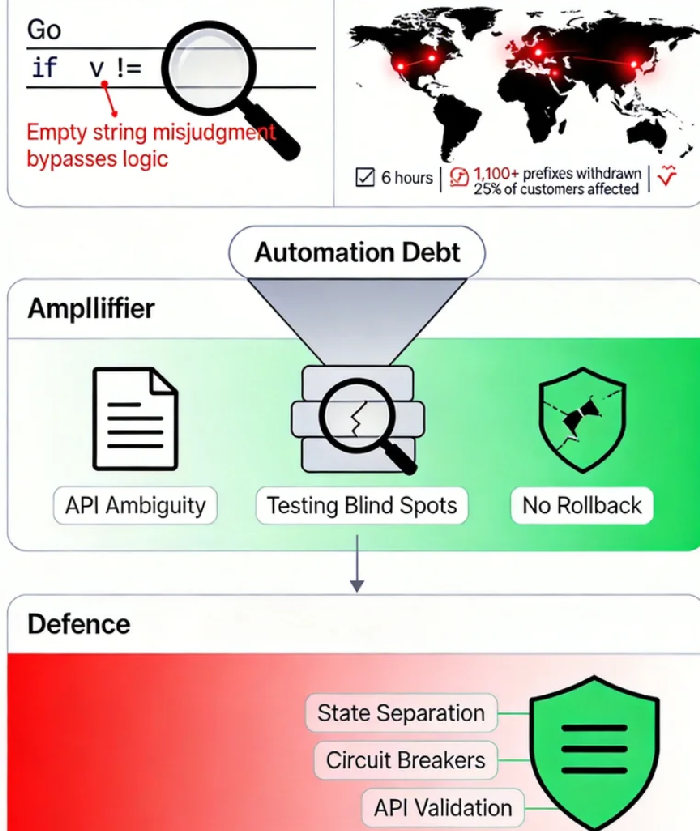

The culprit? A single line of Go code. A routine automation script. A query parameter passed with no value. An if statement that evaluated to false when it should have been true.

This wasn't a sophisticated cyberattack. There was no nation-state actor, no zero-day exploit, no carefully orchestrated breach. The enemy was entirely internal: a cleanup script that, due to a subtle but devastating bug, began methodically deleting customer configurations from Cloudflare's edge.

In the aftermath, Cloudflare engineers worked through the night, manually restoring 300 prefixes that couldn't be recovered through the dashboard because their service bindings had been wiped clean. The website for 1.1.1.1 returned 403 errors. Customers watched their applications vanish from the internet.

This is the hidden cost of automation. And it's a bill that's coming due across the industry.

The Anatomy of a Self-Inflicted Wound

To understand what happened, we need to look at the code. Cloudflare's Addressing API is the source of truth for customer IP addresses on its network. Any change to this dataset propagates instantly to the global edge, a design choice that prioritizes speed but, as we'll see, amplifies risk.

As part of an internal resilience initiative called "Code Orange: Fail Small," Cloudflare engineers set out to automate a previously manual process: cleaning up BYOIP (Bring Your Own IP) prefixes marked for deletion. The goal was noble—replace risky manual procedures with safe, automated workflows.

The implementation took the form of a regularly running background task. Here's the query it made:

```go
resp, err := d.doRequest(ctx, http.MethodGet, `/v1/prefixes?pending_delete`, nil)
```

And here's the relevant part of the API handler:

```go
if v := req.URL.Query().Get("pending_delete"); v != "" {
	// fetch pending objects from the ip_prefixes_deleted table
	prefixes, err := c.RO().IPPrefixes().FetchPrefixesPendingDeletion(ctx)
	// ... return those prefixes
}
```

Do you see the problem? The client passes pending_delete with no value. In Go's net/url package, Query().Get("key") returns an empty string for keys that exist but have no associated value. So v becomes "", the condition v != "" evaluates to false, and the special case is skipped entirely.
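The behavior is easy to reproduce in isolation. The following is a minimal sketch using only the standard library; the helper name flagState is hypothetical and not part of Cloudflare's code. It shows that Get alone cannot tell a valueless key from an absent one, while Values.Has (available since Go 1.17) can.

```go
package main

import (
	"fmt"
	"net/url"
)

// flagState reports how a key appears in a raw query string: whether it is
// present at all, and what Get returns for it.
func flagState(rawQuery, key string) (present bool, value string) {
	q, err := url.ParseQuery(rawQuery)
	if err != nil {
		return false, ""
	}
	return q.Has(key), q.Get(key)
}

func main() {
	// A valueless flag, an explicit value, and no flag at all.
	for _, raw := range []string{"pending_delete", "pending_delete=true", ""} {
		present, value := flagState(raw, "pending_delete")
		fmt.Printf("query=%q present=%t value=%q\n", raw, present, value)
	}
}
```

For the first and third queries Get returns the same empty string, so a check of the form v != "" treats "flag sent without a value" exactly like "flag not sent".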

The API server, lacking any indication that this was a special request for pending deletions, interpreted it as a request for all BYOIP prefixes. The cleanup sub-task, blissfully unaware of the misinterpretation, received the complete list and began systematically deleting every prefix it was given, along with all their dependent objects, including service bindings.

The automation intended to make the system safer had become a digital wrecking ball.

Why Testing Didn't Save Them

Every engineer reading this is thinking the same thing: "How did this make it to production?" Cloudflare asked itself the same question.

The answer reveals uncomfortable truths about how even the best teams test.

First, staging environment limitations. Cloudflare's staging environment mirrors production as closely as possible, but "as closely as possible" isn't always close enough. The mock data they relied on to simulate the cleanup process was insufficient to reveal the bug. When the script ran against simplified test data, it behaved exactly as expected.

Second, incomplete test coverage. The team had thoroughly tested the customer-facing API journey. They'd verified that when a customer explicitly requested pending deletions through the proper channel, everything worked. What they hadn't tested was the scenario where an internal background service independently executed changes to user data without explicit, real-time input.
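One way to close that gap is to test the exact request the background task sends, not only the customer-facing variant. The sketch below is illustrative only: listPrefixes is a hypothetical stand-in that reproduces the handler check quoted earlier, the response bodies are placeholders, and fetch is a helper invented for this example. It uses the standard net/http/httptest package.

```go
package main

import (
	"fmt"
	"io"
	"net/http"
	"net/http/httptest"
)

// listPrefixes is a hypothetical stand-in for the API handler. It reproduces
// the quoted check, so a valueless pending_delete falls through to "all".
func listPrefixes(w http.ResponseWriter, r *http.Request) {
	if v := r.URL.Query().Get("pending_delete"); v != "" {
		fmt.Fprint(w, "pending-only")
		return
	}
	fmt.Fprint(w, "all-prefixes")
}

// fetch sends a GET for path straight to the handler and returns the body.
func fetch(h http.HandlerFunc, path string) string {
	rec := httptest.NewRecorder()
	h(rec, httptest.NewRequest(http.MethodGet, path, nil))
	body, _ := io.ReadAll(rec.Result().Body)
	return string(body)
}

func main() {
	paths := []string{
		"/v1/prefixes?pending_delete=true", // customer-style request
		"/v1/prefixes?pending_delete",      // the background task's request
	}
	for _, path := range paths {
		fmt.Printf("%-34s -> %s\n", path, fetch(listPrefixes, path))
	}
}
```

A test that asserts "pending-only" for the second path would fail against this handler, which is the signal the customer-journey tests never produced.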

Third, the "works on my machine" fallacy at scale. In isolation, each piece of the system functioned correctly. The API responded to queries according to its specification. The cleanup task iterated over results and performed deletions. The problem was in the interaction—an interaction that only manifested with real-world data patterns and state.

This is the insidious nature of automation debt. It doesn't announce itself. It accumulates in the gaps between components, in the untested pathways, in the assumptions that seemed reasonable at the time.

The Three Faces of Security Debt

The Cloudflare incident is a textbook case of what researchers call "security debt": the hidden cost of technical shortcuts and fragmented systems. Like financial debt, it accumulates interest. Unlike financial debt, the interest is paid in downtime, breaches, and customer trust.

In this case, we can identify three distinct forms of security debt:

Code Logic Debt. The fatal if statement itself represents a class of errors that are easy to make and hard to catch. Go's Query().Get() returning an empty string for present-but-valueless keys is documented behavior, but it's behavior that can easily be overlooked. The code assumed a certain interpretation of the API contract; the API implemented a different one. This semantic mismatch is a form of technical debt that accrues risk with every line of code written under the same assumption.

API Design Debt. The API endpoint accepted pending_delete as a flag rather than requiring an explicit boolean parameter like ?pending_delete=true. This ambiguity created room for misinterpretation. As the research community has documented, API design choices are a significant source of integration complexity debt. When contracts are fuzzy, implementations become fragile.

Test Coverage Debt. The testing strategy focused on user-initiated paths while neglecting internal automation paths. This is a common blind spot. We test what we expect people to do; we rarely test what our own systems might do to themselves. A framework for quantifying security debt would flag this as a "Compliance Visibility Gap": a gap between the system we think we have and the system we actually operate.

The Amplifier Effect: When Automation Magnifies Mistakes

Perhaps the most terrifying aspect of this incident is the speed and scale at which it unfolded. Once the cleanup task began executing, changes propagated to Cloudflare's global network in seconds. There was no gradual rollout, no canary deployment, no circuit breaker to detect abnormal deletion patterns.

This is the dark side of automation. Manual processes, for all their slowness and risk of human error, have a natural governor: humans can only act so fast. Automation removes that governor entirely. When automation goes wrong, it goes wrong at machine speed.

By the time engineers noticed the impact and shut down the offending task, 1,100 prefixes had already been withdrawn, 25% of all BYOIP prefixes advertised at the time. The damage was done in a window measured in minutes, not hours.

This pattern, a configuration change propagating instantly and catastrophically, had bitten Cloudflare before. In November 2025, a bot-management update caused widespread degradation. In December 2025, a security tool modification triggered another outage. Both shared the same root cause: the ability to deploy configuration changes globally in seconds, without the safety controls applied to software releases.

Building the Defenses We Actually Need

In the wake of the February 2026 incident, Cloudflare announced a series of measures that amount to a masterclass in defense against automation debt.

1. State Separation: Decoupling Configuration from Execution

The core problem was that changes to the Addressing API propagated instantly to the edge. There was no buffer, no staging ground, no opportunity to catch errors before they became global.

The solution is state separation. Configuration changes shouldn't touch production directly. Instead, the system should periodically take "snapshots" of the configuration database and deploy them like software binaries, through gradual, health-mediated rollouts. If a snapshot causes problems, the system can instantly roll back to a known-good state.

This is the architectural equivalent of an undo button. It doesn't prevent mistakes, but it makes them reversible.
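The snapshot-and-rollback idea can be sketched in a few lines. This is not Cloudflare's design: Snapshot, Store, Promote, and Rollback are hypothetical names, the store lives in memory, and the health check is a placeholder callback supplied by the caller.

```go
package main

import (
	"errors"
	"fmt"
)

// Snapshot is an immutable, versioned copy of the configuration.
type Snapshot struct {
	Version  int
	Prefixes []string
}

// Store keeps every promoted snapshot so the active one can be rolled back.
type Store struct {
	history []Snapshot
	next    int // last version number handed out
}

// Promote copies the candidate prefixes into a new snapshot and makes it
// active only if the health check accepts it.
func (s *Store) Promote(prefixes []string, healthy func(Snapshot) bool) error {
	snap := Snapshot{Version: s.next + 1, Prefixes: append([]string(nil), prefixes...)}
	if !healthy(snap) {
		return errors.New("snapshot rejected by health check")
	}
	s.next++
	s.history = append(s.history, snap)
	return nil
}

// Active returns the snapshot currently being served, if any.
func (s *Store) Active() (Snapshot, bool) {
	if len(s.history) == 0 {
		return Snapshot{}, false
	}
	return s.history[len(s.history)-1], true
}

// Rollback discards the active snapshot and reverts to the previous one.
func (s *Store) Rollback() error {
	if len(s.history) < 2 {
		return errors.New("no earlier snapshot to roll back to")
	}
	s.history = s.history[:len(s.history)-1]
	return nil
}

func main() {
	// Placeholder health check: refuse a snapshot that advertises nothing.
	notEmpty := func(s Snapshot) bool { return len(s.Prefixes) > 0 }

	store := &Store{}
	fmt.Println(store.Promote([]string{"192.0.2.0/24", "198.51.100.0/24"}, notEmpty))
	fmt.Println(store.Promote(nil, notEmpty)) // rejected, active snapshot unchanged
	fmt.Println(store.Promote([]string{"192.0.2.0/24"}, notEmpty))
	fmt.Println(store.Rollback())
	active, _ := store.Active()
	fmt.Printf("active version %d with %d prefixes\n", active.Version, len(active.Prefixes))
}
```

The point of the design is that the edge only ever serves a snapshot that passed a check, and the previous snapshot is still on hand when the check turns out to be insufficient.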

2. Circuit Breakers for Large-Scale Changes

The cleanup task deleted prefixes one by one, systematically dismantling customer configurations. What if the system had been watching for exactly this pattern?

Cloudflare is now building circuit breakers that monitor the rate and scope of changes. If the system detects an abnormally rapid or widespread withdrawal of prefixes, the signature of a runaway automation, it can automatically block further changes until an engineer investigates.

This is the principle of "fail small" made operational. Don't just hope errors won't happen; assume they will, and build mechanisms to contain them.

3. API Schema Validation: Make Contracts Explicit

The ambiguous pending_delete parameter would have been caught by stricter API design. Requiring an explicit boolean value, either ?pending_delete=true or ?pending_delete=false, eliminates the empty-string edge case entirely.

More broadly, APIs should validate all inputs against a strict schema. If a request doesn't match the expected format, it should be rejected outright rather than interpreted in potentially dangerous ways.
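A strict parser for this one parameter might look like the following sketch. The function name parsePendingDelete and the rule that every other query key is rejected are assumptions made for illustration; a real endpoint would carry a longer allow-list.

```go
package main

import (
	"errors"
	"fmt"
	"net/url"
)

// parsePendingDelete accepts either no pending_delete key at all or exactly
// one explicit "true" or "false". Anything else is an error, never a guess.
func parsePendingDelete(rawQuery string) (bool, error) {
	q, err := url.ParseQuery(rawQuery)
	if err != nil {
		return false, fmt.Errorf("malformed query: %w", err)
	}
	for key := range q {
		if key != "pending_delete" { // hypothetical allow-list of one key
			return false, fmt.Errorf("unknown query parameter %q", key)
		}
	}
	vals, present := q["pending_delete"]
	if !present {
		return false, nil
	}
	if len(vals) != 1 {
		return false, errors.New("pending_delete must appear exactly once")
	}
	switch vals[0] {
	case "true":
		return true, nil
	case "false":
		return false, nil
	default:
		return false, fmt.Errorf("pending_delete must be true or false, got %q", vals[0])
	}
}

func main() {
	queries := []string{"", "pending_delete=true", "pending_delete=false", "pending_delete", "pending_delet=true"}
	for _, raw := range queries {
		pending, err := parsePendingDelete(raw)
		fmt.Printf("query=%q pending=%t err=%v\n", raw, pending, err)
	}
}
```

With this parser the valueless flag from the incident produces an error the client has to handle, instead of a silently different result set.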

4. Treat Configuration Like Code

This is perhaps the most profound shift. Cloudflare's internal "Code Orange: Fail Small" initiative aims to bring the same rigor to configuration changes that the company already applies to software releases.

For software, Cloudflare uses Health-Mediated Deployment (HMD): gradual rollouts with automated health checks and instant rollbacks on failure. For configuration, historically, changes deployed instantly. The new approach extends HMD to configuration, meaning that even internal automation tasks will be subject to the same safety controls as customer-facing software updates.
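The general shape of a health-gated rollout can be sketched as follows. This is a generic illustration and not a description of Cloudflare's HMD system: Stage, Rollout, and the stage names are hypothetical, and the health checks are stubbed with fixed results.

```go
package main

import "fmt"

// Stage is one step of a gradual rollout, such as a canary or a region.
type Stage struct {
	Name    string
	Apply   func()
	Revert  func()
	Healthy func() bool
}

// Rollout applies stages in order and checks health after each one. On the
// first unhealthy stage it reverts everything applied so far, newest first,
// and never reaches the later stages.
func Rollout(stages []Stage) error {
	for i, st := range stages {
		st.Apply()
		if st.Healthy() {
			continue
		}
		for j := i; j >= 0; j-- {
			stages[j].Revert()
		}
		return fmt.Errorf("stage %q unhealthy, reverted %d stage(s)", st.Name, i+1)
	}
	return nil
}

func main() {
	applied := map[string]bool{}
	// newStage builds a stage whose health result is fixed for the demo.
	newStage := func(name string, healthy bool) Stage {
		return Stage{
			Name:    name,
			Apply:   func() { applied[name] = true },
			Revert:  func() { delete(applied, name) },
			Healthy: func() bool { return healthy },
		}
	}

	err := Rollout([]Stage{
		newStage("canary", true),
		newStage("one-region", false), // simulated health failure
		newStage("global", true),
	})
	fmt.Println("result:", err)
	fmt.Println("stages still applied:", len(applied))
}
```

Routing an internal cleanup task's changes through a loop like this means a bad change stops at the first unhealthy stage rather than reaching every location at once.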

The Broader Lesson: Automation Debt Is Everyone's Problem

It's easy to read this post-mortem and think, "Well, I'm not Cloudflare. My systems aren't that complex. This couldn't happen to me."

That's exactly the wrong takeaway.

Cloudflare's infrastructure is orders of magnitude more sophisticated than most organizations' setups. Yet the bug that brought them down was astonishingly simple: a single if statement, a missing value, an empty string. This wasn't a failure of complexity; it was a failure of fundamentals.

The same mistake could happen anywhere. In fact, it probably already has—just with smaller blast radii.

The broader lesson is that automation debt accumulates silently but pays out catastrophically. Every script you write without testing the error paths, every API endpoint with ambiguous parameters, every configuration change that deploys without a rollback plan—these are all entries on your security debt ledger.

And the interest? It compounds daily, in the form of risk you don't yet know you're carrying.

A Practical Framework for Your Own Systems

You don't need Cloudflare's scale to learn from Cloudflare's mistakes. Here's a practical checklist for auditing your own automation debt:

Review your automation's error handling. What happens when an API returns unexpected data? When a parameter is missing? When a dependency fails? Your automation should fail gracefully, not catastrophically.

Test the paths you don't expect. We're good at testing happy paths. We're less good at testing what happens when internal services talk to each other in unanticipated ways. Add scenarios to your test suite that simulate automation-to-automation interactions.

Build gradual rollout capabilities. If you can deploy a change globally in seconds, you have only seconds to catch an error before it becomes a disaster. Implement canary deployments, even for configuration changes, and make rollbacks instantaneous.

Install circuit breakers. Monitor for abnormal patterns. If your system suddenly starts deleting things at 10x the normal rate, that should trigger an automatic halt, not a "let's see what happens."

Treat configuration as code. It should be versioned, reviewed, tested, and deployed with the same care as application code. If you wouldn't push a code change without a pull request and automated tests, don't push a configuration change that way either.

On February 20, 2026, Cloudflare's engineers worked through the night to restore service for hundreds of customers. By 23:03 UTC, the last of the 300 stubborn prefixes was manually repaired. The incident was over.

But the lessons will echo for years.

The most dangerous threats to our systems aren't always the ones coming from outside. Sometimes, they're the ones we write ourselves—the cleanup script with a subtle bug, the automation that assumes too much, the configuration that deploys without a safety net.

We call this "automation debt," but that term is too mild. Debt implies something that can be paid down over time. What we're really talking about is operational fragility—the accumulated risk that someday, somewhere, a single line of code will bring down the house.

Cloudflare's post-mortem is a gift to the industry. It's a detailed, honest accounting of how a world-class engineering team made a mistake, and how they're fixing it. The question is whether the rest of us will learn from it, or wait to make the same mistakes ourselves.

The next time you're reviewing a pull request, approving a configuration change, or designing an automation workflow, ask yourself: "If this goes wrong, how bad could it be? And what's stopping it from going wrong right now?"

The answers might surprise you. And they might just save you from your own 2 AM emergency call.