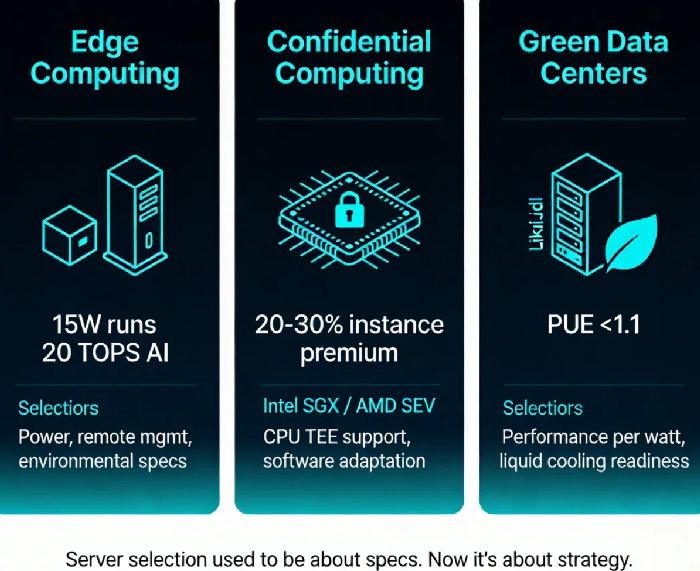

Edge, Confidential, and Green: Three Forces Reshaping Server Infrastructure Decisions

Last month, a friend building a smart retail chain asked me to review his server selection. Three hundred stores, each running real-time AI to detect empty shelves and employee violations. Data couldn't go to the cloud—store networks were unreliable, and video feeds were too sensitive.

I asked the obvious question: "What's your budget per store?"

He said: "Under three thousand RMB."

I blinked. Three thousand yuan for a server running real-time AI inference? Five years ago, that was science fiction.

Then I remembered: a manufacturer just launched an edge AI box. Fifteen watts power consumption. Twenty TOPS of compute. Twenty‑nine hundred ninety‑nine yuan. As I write this, it's already running in a hundred stores.

That conversation made me realize something fundamental: The rules of server selection are quietly being rewritten. If you're still choosing servers based on core counts and RAM size, you're already behind.

01 Edge Computing: Servers Are No Longer "Things in Data Centers"

We grew up with a mental model of servers: rack‑mounted boxes in climate‑controlled rooms, dual power supplies, raised floors. Edge computing is shredding that model.

What does an edge server look like?

It can be a waterproof box bolted to a utility pole, processing traffic lights in real time. It can be a tiny computer tucked inside a gas station kiosk, analyzing transaction fraud. It can be that AI box behind the cash register in my friend's stores, watching for empty shelves.

These "servers" share one trait: They're not in a data center. Nobody babysits them. The environment is hostile. But they must be reliable.

Here's what this means for server selection:

First, power consumption is now a hard constraint. Stores, streetsides, factories: none of them have dedicated server room AC. If a server draws more than 100 watts, cooling becomes a real problem. This is why ARM is suddenly competitive: for many edge workloads it delivers comparable performance at noticeably lower power than x86.

Second, remote management isn't optional—it's mandatory. Hundreds of edge nodes spread across a city, a province, a country. You can't send someone to fix each one. Servers must support out‑of‑band management (IPMI/Redfish). Firmware must update remotely. The OS must auto‑recover.
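To make the remote management point concrete, here is a minimal sketch of fleet triage. Redfish describes each machine as a JSON `ComputerSystem` resource that carries `PowerState` and `Status.Health` fields. The snippet only parses such payloads; how you fetch them (URLs, credentials) is left out, and the `store-00x` node names and sample payloads are made up for illustration.

```python
import json

def needs_attention(system_json: str) -> bool:
    """Return True if a Redfish ComputerSystem payload is off or not healthy."""
    system = json.loads(system_json)
    power_on = system.get("PowerState") == "On"
    health = system.get("Status", {}).get("Health")
    return not power_on or health != "OK"

def triage(fleet: dict[str, str]) -> list[str]:
    """Return the sorted names of nodes that someone should look at."""
    return sorted(name for name, payload in fleet.items() if needs_attention(payload))

# Hypothetical payloads, trimmed to the two fields this sketch reads.
sample_fleet = {
    "store-001": json.dumps({"PowerState": "On", "Status": {"Health": "OK"}}),
    "store-002": json.dumps({"PowerState": "On", "Status": {"Health": "Warning"}}),
    "store-003": json.dumps({"PowerState": "Off", "Status": {"Health": "OK"}}),
}

print(triage(sample_fleet))  # ['store-002', 'store-003']
```

With three hundred nodes, a list like this is the difference between a dashboard and a road trip.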

Third, environmental specs matter more than raw performance. Industrial temperature ranges (-20°C to 60°C). Dust and water resistance. Vibration tolerance. These specs never appeared on traditional server selection checklists. At the edge, they matter more than CPU clock speed.

Counter‑intuitive truth: Edge computing won't kill the cloud—it will make the cloud more important. Edge handles real‑time data; the cloud aggregates, analyzes, and stores long term. The servers you choose today will likely operate in a cloud‑edge continuum.

02 Confidential Computing: The Missing Piece of Data Security

Here's an uncomfortable question: Your data is encrypted in transit (TLS). It's encrypted at rest (encrypted disks). But what about in memory?

The answer, in most cases: plain text.

What does that mean in practice? If a cloud provider has a rogue insider, if an attacker gains root access, if memory is dumped and analyzed—your data is exposed.

Confidential computing closes this gap.

The core technology is the Trusted Execution Environment (TEE): a hardware‑enforced, isolated region whose memory the CPU keeps encrypted. Data processed there is shielded even from the operating system or hypervisor. Intel SGX, AMD SEV, and Arm Confidential Compute Architecture (CCA) all take this approach, at different granularities.

Here's what this means for server selection:

First, your CPU choice must consider hardware trust. Not every processor supports TEE. Not every TEE is useful for your workload. If you handle financial transactions, medical records, or user privacy data, this feature will soon be non‑negotiable.
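A quick first check on an x86 Linux host is to look at the CPU feature flags. The sketch below assumes the flag names that recent Linux kernels list in `/proc/cpuinfo` (`sgx`, `sev`, `sev_es`, `sev_snp`); the exact names depend on kernel version, and a flag only tells you the CPU advertises the feature, not that firmware and hypervisor have it switched on. Arm hosts report features differently and are not covered here.

```python
# Assumed flag names as shown by recent Linux kernels; verify on your own systems.
TEE_FLAGS = {
    "sgx": "Intel SGX",
    "sev": "AMD SEV",
    "sev_es": "AMD SEV-ES",
    "sev_snp": "AMD SEV-SNP",
}

def detect_tee_flags(cpuinfo_text: str) -> list[str]:
    """Return sorted labels of TEE-related flags found in /proc/cpuinfo text."""
    flags = set()
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            flags.update(value.split())
    return sorted(label for flag, label in TEE_FLAGS.items() if flag in flags)

# Made-up excerpt for illustration.
sample = "processor\t: 0\nflags\t\t: fpu vme sme sev sev_es\n"
print(detect_tee_flags(sample))  # ['AMD SEV', 'AMD SEV-ES']
```

On a real machine you would pass in the contents of `/proc/cpuinfo`. Treat an empty result as "ask the vendor", not as proof of absence.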

Second, instance types now have a "confidential" tier. Cloud providers offer "confidential computing instances" at a 20‑30% premium over regular instances. For compliance‑sensitive workloads, that premium is mandatory spending.

Third, the software stack must adapt. Running in a TEE isn't a drop‑in replacement. Code may need modification, recompilation, memory model adjustments. Your selection process must account for this engineering cost.

Counter‑intuitive truth: Confidential computing may be the most overlooked corner of data security. We've spent two decades encrypting data in transit and at rest. Data in use has been a blind spot. Regulators are starting to notice.

03 Green Data Centers: Not Ideology, Arithmetic

Last year, I toured a newly built data center. What struck me wasn't the server count—it was the absence of traditional AC units.

They'd replaced them with immersion cooling: servers dunked in dielectric fluid, heat carried away by liquid, PUE below 1.1. For context, a good air‑cooled data center struggles to hit 1.5.

Why the sudden shift to green data centers? Not because corporations found religion. Because electricity bills got too damn high.

A fully loaded server can now cost more in electricity over three years than its purchase price, especially once cooling overhead is counted. Shave 30‑40% off cooling power, and the savings go a long way toward your next generation of servers.
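The arithmetic is easy to rerun with your own numbers. The sketch below uses illustrative assumptions, not figures from any real deployment: a server drawing 1 kW, a tariff of 1.0 yuan per kWh, and the two PUE values mentioned earlier (1.5 for air cooling, 1.1 for immersion).

```python
HOURS_PER_YEAR = 24 * 365

def electricity_cost(it_watts: float, pue: float, price_per_kwh: float, years: float) -> float:
    """Facility-level electricity cost: IT energy scaled by PUE, times the tariff."""
    kwh = it_watts / 1000 * HOURS_PER_YEAR * years * pue
    return kwh * price_per_kwh

# Assumed inputs: 1 kW server, 1.0 yuan/kWh, three years.
air = electricity_cost(it_watts=1000, pue=1.5, price_per_kwh=1.0, years=3)
immersion = electricity_cost(it_watts=1000, pue=1.1, price_per_kwh=1.0, years=3)

print(round(air), round(immersion), round(air - immersion))
```

Under these assumptions the three-year bill is roughly 39,420 yuan with air cooling and 28,908 yuan with immersion cooling, a difference of about 10,500 yuan per server. Whether that exceeds the hardware price depends entirely on the server and the tariff you plug in.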

Here's what this means for server selection:

First, efficiency metrics join the scorecard. You used to look at SPECint. Now you look at SPECint per watt. Same performance at 30% lower power? Over five years, that's a whole extra rack of servers for free.

Second, liquid cooling readiness becomes a differentiator. Traditional servers were designed for air. Dropping them into liquid tanks may require retrofits, or may fail entirely. New server generations offer liquid‑cooled variants, or at least reserve the ports needed to add liquid cooling later.

Third, site selection logic is shifting. Data centers used to locate near cities—latency mattered. Future centers will locate near cheap renewables—hydro, wind, solar—because electricity costs matter.

Counter‑intuitive truth: Green data centers aren't a cost—they're a competitive advantage. The earliest adopters of liquid cooling and renewables will win the next decade's cost per compute war.

04 How These Three Forces Affect Your Choices Today

Trend pieces are fun, but they need to land somewhere practical. Let's translate these forces into concrete selection criteria.

If you're building edge projects (stores, factories, roadside, field deployments):

Calculate power budget first, performance second

Consider ARM—better performance per watt

Out‑of‑band management is mandatory; verify remote firmware upgrade paths

Ask environmental questions: temperature range? dust rating? vibration tested?
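The checklist above can be turned into a simple screening pass. This is a sketch only: the `EdgeCandidate` fields, the two sample devices, and the default thresholds (100 W, -20°C to 60°C, taken from the figures earlier in this article) are illustrative assumptions, not a vendor spec format.

```python
from dataclasses import dataclass

@dataclass
class EdgeCandidate:
    name: str
    watts: float                  # draw under load
    min_temp_c: float             # rated operating range, low end
    max_temp_c: float             # rated operating range, high end
    out_of_band_mgmt: bool        # e.g. IPMI or Redfish
    remote_firmware_update: bool

def screen(c, power_budget_w=100.0, site_min_c=-20.0, site_max_c=60.0):
    """Return the reasons a candidate fails; an empty list means it passes."""
    problems = []
    if c.watts > power_budget_w:  # power budget first, as the checklist says
        problems.append(f"draws {c.watts:g} W, budget is {power_budget_w:g} W")
    if c.min_temp_c > site_min_c or c.max_temp_c < site_max_c:
        problems.append("rated temperature range does not cover the site")
    if not c.out_of_band_mgmt:
        problems.append("no out-of-band management")
    if not c.remote_firmware_update:
        problems.append("firmware cannot be updated remotely")
    return problems

# Hypothetical candidates for illustration only.
fanless_box = EdgeCandidate("fanless-box", 15, -20, 60, True, True)
office_tower = EdgeCandidate("office-tower", 250, 10, 35, False, True)

for candidate in (fanless_box, office_tower):
    print(candidate.name, screen(candidate) or "passes")
```

Note that raw performance never appears in the screen. A candidate has to survive the site before its benchmark numbers matter.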

If you're handling sensitive data (finance, healthcare, user privacy):

Audit whether your data needs "in‑use" encryption

If compliance is trending stricter, start testing confidential instances now

Your software team needs lead time to learn TEE programming models

If you're planning long‑term infrastructure (new data centers, large‑scale procurement):

Add "performance per watt" to your scoring matrix

Ask vendors: liquid cooling ready? what modifications needed?

Model total cost of ownership including electricity—not just purchase price
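Here is one way the scoring could look in practice, as a rough sketch. The `Quote` fields, the two vendor quotes, the 1.0 yuan per kWh tariff, and the PUE of 1.5 are all made-up inputs; `perf` stands for whatever benchmark score you already use.

```python
from dataclasses import dataclass

@dataclass
class Quote:
    name: str
    price: float   # purchase price, yuan
    watts: float   # typical draw under load
    perf: float    # benchmark score of your choice

def perf_per_watt(q: Quote) -> float:
    return q.perf / q.watts

def tco(q: Quote, years: float, price_per_kwh: float, pue: float) -> float:
    """Purchase price plus facility-level electricity over the period."""
    energy_kwh = q.watts / 1000 * 24 * 365 * years * pue
    return q.price + energy_kwh * price_per_kwh

def rank_by_cost_per_perf(quotes, years=5, price_per_kwh=1.0, pue=1.5):
    """Sort quotes from cheapest to most expensive per unit of performance."""
    return sorted(quotes, key=lambda q: tco(q, years, price_per_kwh, pue) / q.perf)

# Hypothetical quotes: B costs more up front but draws 30% less power.
quotes = [
    Quote("vendor-a", price=50_000, watts=500, perf=400),
    Quote("vendor-b", price=56_000, watts=350, perf=400),
]

for q in rank_by_cost_per_perf(quotes):
    print(q.name, round(perf_per_watt(q), 2), round(tco(q, 5, 1.0, 1.5)))
```

With these inputs the pricier vendor-b ranks first: its five-year total comes to about 78,995 yuan against 82,850 for vendor-a. Change the tariff or the time horizon and the ranking can flip, which is exactly why the electricity line belongs in the model.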

05 The Bottom Line

My friend with the smart retail chain? He bought that 2,999 yuan edge AI box. Not because it was cheap, but because it draws 15 watts. His stores could plug it into a standard outlet—no electrical work, no AC, no drama.

He said something I haven't forgotten:

"Server selection used to be about specs. Now it's about strategy."

Edge computing, confidential computing, green data centers—these three forces are turning servers from commodity hardware into strategic decisions. Choose right, and you cut costs in half while accelerating your roadmap. Choose wrong, and you're locked into a legacy you'll spend years escaping.

The rules have changed. Will your next server choice reflect the old game or the new one?