In 2016, Netflix's recommendation team was about to launch a new model. Three weeks of testing in staging. Everything looked perfect. Then they ran their standard "chaos experiment"—they deliberately cut the database connection to one of the services the recommendation system depended on.

What happened next surprised everyone: the entire recommendation system collapsed within 30 seconds. The homepage failed to load. Pages rendered as blank screens.

The root cause? The code had retry logic—but no backoff. When the database died, every request retried simultaneously, overwhelming another service. Those retries triggered more retries, and soon nothing could recover.
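
The missing piece in that story, backoff, fits in a few lines. This is a minimal sketch, not Netflix's code: the function names, the 0.1s base delay, and the 5s cap are all assumptions picked for illustration. It uses capped exponential backoff with full jitter.

```python
import random
import time


def backoff_delays(max_retries, base=0.1, cap=5.0, rng=random.random):
    """Yield one sleep duration per retry: capped exponential backoff with full jitter."""
    for attempt in range(max_retries):
        ceiling = min(cap, base * (2 ** attempt))  # 0.1s, 0.2s, 0.4s, ... up to cap
        yield rng() * ceiling                      # random point in [0, ceiling)


def call_with_retries(operation, max_attempts=5, sleep=time.sleep, **backoff_kwargs):
    """Call operation(); after each failure, wait a growing, jittered delay before retrying."""
    delays = backoff_delays(max_attempts - 1, **backoff_kwargs)
    last_error = None
    for _ in range(max_attempts):
        try:
            return operation()
        except Exception as exc:  # real code should catch only retryable errors
            last_error = exc
            delay = next(delays, None)
            if delay is None:     # retries exhausted
                break
            sleep(delay)
    raise last_error
```

With jitter, clients that failed at the same moment spread their retries out instead of hitting the struggling service in lockstep.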

If real users had triggered that bug in production, the damage would have been catastrophic. Netflix never had to find out how bad. Because they had already "crashed" their own system, in a controlled environment, before users ever noticed.

That's chaos engineering: injecting failures proactively. Breaking your weakest points on your own schedule, before your users do it for you.

01 What Chaos Engineering Is Not

Before we talk about how, let's clear up some misconceptions.

Chaos engineering is not random destruction. Some people hear "inject failures" and imagine someone running around flipping switches. No. Chaos engineering is deliberate experimentation. Every step is designed: hypothesis, injection, observation, validation. It's not throwing grenades. It's defusing bombs.

Chaos engineering is not just for staging. You can start in staging, of course. But real confidence comes from production. Production traffic, production data, production dependencies: no staging environment can replicate those. Production experiments do need guardrails, though: minimal blast radius, real-time monitoring, automatic rollback.

Chaos engineering is not about proving your system will fail. It's about proving it won't. The goal isn't destruction—it's validation. Validating that your high-availability architecture actually works. That your failover mechanisms actually fail over. That your alerts actually fire.

02 The Vaccine Analogy

Chaos engineering works much like a vaccine.

A vaccine injects a small amount of weakened virus into your body. Your immune system learns to recognize it. When the real virus shows up, your body knows what to do.

Chaos engineering injects small, controlled failures into your system. Your system exercises its defenses: circuit breakers, rate limiting, graceful degradation, retries. When real failures happen, your system knows what to do.

A system that has never been through chaos engineering is like an unvaccinated person. The first time it meets a real failure, it probably won't survive. Because those circuit breakers may have never actually opened. Those fallback paths may have never actually been walked. Those backup nodes may have never actually taken traffic.
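
To make "a circuit breaker that has never opened" concrete, here is a minimal sketch of one. The class name, the thresholds, and the fallback callback are assumptions for illustration, not any particular library's API. A chaos experiment is what drives code like this through its open and half-open states for the first time.

```python
import time


class CircuitBreaker:
    """Closed -> open after N consecutive failures -> half-open after a cooldown."""

    def __init__(self, failure_threshold=3, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    @property
    def state(self):
        if self.opened_at is None:
            return "closed"
        if self.clock() - self.opened_at >= self.reset_timeout:
            return "half-open"  # cooldown elapsed: allow one trial call
        return "open"

    def call(self, operation, fallback):
        if self.state == "open":
            return fallback()  # fail fast, do not touch the dependency
        try:
            result = operation()
        except Exception:
            self.failures += 1
            if self.state == "half-open" or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # open (or re-open) the circuit
            return fallback()
        # Success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

Until a dependency really fails, the only branch this code ever runs is the happy path at the bottom.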

Counter-intuitive truth: Your system is most vulnerable not under peak load, but right after launch. Because every protection mechanism is "theoretically" working. None have been tested in reality.

03 How to Design a Chaos Experiment

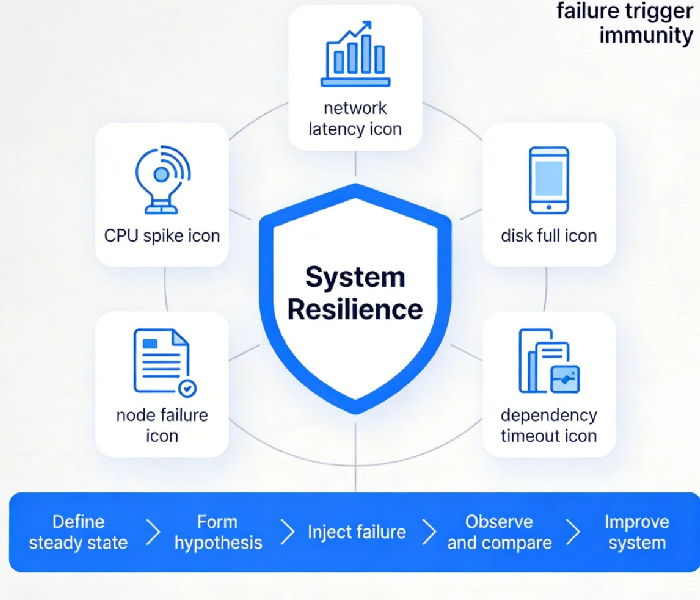

Chaos experiments aren't just running random failure scripts. They follow a structure.

Step 1: Define steady state

What does "normal" look like? Pick measurable metrics: latency, error rate, throughput. Establish a baseline. This is your control group.
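
As a rough sketch of what a baseline can look like in code, the snippet below reduces a window of request records to three numbers. The `SteadyState` fields, the choice of p99, and the `(latency_ms, succeeded)` record shape are assumptions for the example; in practice these values usually come from your monitoring system.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class SteadyState:
    p99_latency_ms: float
    error_rate: float
    throughput_rps: float


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(requests, window_seconds):
    """requests: list of (latency_ms, succeeded) tuples seen during the window."""
    if not requests:
        raise ValueError("need at least one request to define a baseline")
    latencies = [latency for latency, _ in requests]
    failures = sum(1 for _, ok in requests if not ok)
    return SteadyState(
        p99_latency_ms=percentile(latencies, 99),
        error_rate=failures / len(requests),
        throughput_rps=len(requests) / window_seconds,
    )
```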

Step 2: Form a hypothesis

"If we kill one order service pod, users can still place orders." Write it down. Make it testable.

Step 3: Inject failure

Choose a failure type. Start small. Common failure types:

Infrastructure: CPU spike, memory exhaustion, disk full

Network: latency, packet loss, connection refusal

Application: exceptions, timeouts, process death

Dependencies: database disconnection, downstream service outage
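
For application-level and dependency faults, the injection itself can be as small as a wrapper around the call you want to disturb. The sketch below is hypothetical (the names `with_faults` and `InjectedFault` are made up here); infrastructure and network faults are normally injected by a dedicated tool rather than in application code.

```python
import random
import time


class InjectedFault(Exception):
    """Raised in place of a real dependency error during an experiment."""


def with_faults(func, latency_s=0.0, failure_rate=0.0, rng=random.random, sleep=time.sleep):
    """Wrap func so every call is delayed by latency_s and fails with probability failure_rate."""
    name = getattr(func, "__name__", "dependency")

    def wrapper(*args, **kwargs):
        if latency_s > 0:
            sleep(latency_s)                 # simulated slow network or slow dependency
        if rng() < failure_rate:
            raise InjectedFault(f"injected failure calling {name}")
        return func(*args, **kwargs)         # otherwise behave normally

    return wrapper
```

Wrapping a database query with `with_faults(query, latency_s=0.05)` is the in-process equivalent of a small latency experiment: nothing breaks outright, but timeouts and retries upstream get exercised.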

Step 4: Observe and compare

After injection, watch your system. Did steady state metrics change? Did users notice? Did auto-recovery mechanisms kick in?
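
The comparison can be written down as a small check, so "did steady state change?" has a yes-or-no answer. The metric names and the tolerances here (1.5x latency, one extra percentage point of errors, 20% throughput drop) are assumptions for illustration; pick bounds that match your own hypothesis.

```python
def check_hypothesis(baseline, observed,
                     max_latency_ratio=1.5,
                     max_error_rate_increase=0.01,
                     min_throughput_ratio=0.8):
    """Return the names of metrics that left the tolerated range; empty means steady state held."""
    violations = []
    if observed["p99_latency_ms"] > baseline["p99_latency_ms"] * max_latency_ratio:
        violations.append("p99_latency_ms")
    if observed["error_rate"] > baseline["error_rate"] + max_error_rate_increase:
        violations.append("error_rate")
    if observed["throughput_rps"] < baseline["throughput_rps"] * min_throughput_ratio:
        violations.append("throughput_rps")
    return violations
```

An empty list supports the hypothesis. Anything else is the blind spot you were looking for.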

Step 5: Improve

If your system survived—great, hypothesis confirmed. If it didn't—you just found a blind spot. Fix it. Then run another experiment.

04 Start Small: The Minimal Blast Radius

Most people hear "chaos engineering" and panic: "You want me to break production? My boss will kill me."

Fair. Start small.

First, pick a non-critical service. Internal tools, edge services. If they break, no one notices. Practice here.

Second, pick a "safe" failure type. Add 50ms of network latency. The service won't die, but it might trigger timeout and retry logic. See if your alerts actually fire.

Third, run experiments manually, not automatically. Automation comes later. Run manually first, learn your system's behavior, then codify.

Fourth, expand gradually. From edge services to core services. From off-peak to peak. From single failures to compound failures. Every expansion needs a rollback plan.
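
A rollback plan can be as simple as "undo the fault no matter what, and undo it early if things look bad." Here is a minimal sketch under assumed names: `inject`, `rollback`, and `read_error_rate` are hypothetical callbacks you would supply, and the 5% abort threshold is only an example.

```python
def run_experiment(inject, rollback, read_error_rate,
                   abort_threshold=0.05, checks=10, wait=lambda: None):
    """Inject a fault, poll the error rate, and abort early if it crosses abort_threshold.

    Returns "completed" or "aborted". rollback() runs in every case,
    including when inject() or a metric read raises.
    """
    try:
        inject()
        for _ in range(checks):
            if read_error_rate() > abort_threshold:
                return "aborted"      # guardrail tripped: stop the experiment now
            wait()                    # e.g. sleep between metric reads
        return "completed"
    finally:
        rollback()
```

Putting the rollback in `finally` means a crash in the experiment script itself cannot leave the fault in place.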

The minimal blast radius principle: Keep affected users under 1% per experiment. If something goes wrong, you can tolerate 1% impact.
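
One way to hold an experiment to about 1% of users is to hash each user into a bucket and only inject faults for the lowest buckets. This is a sketch, assuming string user IDs and a made-up `in_blast_radius` helper; hashing on the experiment name as well keeps the same unlucky users from landing in every experiment.

```python
import hashlib


def in_blast_radius(user_id, experiment, percent=1.0):
    """Deterministically place roughly `percent` percent of users inside an experiment."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # stable bucket in 0..9999
    return bucket < percent * 100                        # 1.0% -> buckets 0..99
```

Because the assignment is deterministic, a given user is either in or out for the whole experiment, which makes their reports much easier to correlate with the injected fault.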

05 Tooling Landscape

Chaos engineering tools have matured. Choose what fits.

Open source:

Chaos Mesh: Cloud-native, great for Kubernetes. Pod-level injection, active community.

ChaosBlade: Alibaba's open-source tool. Supports multiple environments (host, container, K8s). Rich failure types.

Litmus: Kubernetes-native, built specifically for chaos experiments. Good documentation.

Commercial:

Gremlin: One of the first commercial chaos engineering platforms. Great UI, safety features. Check current pricing for free or trial options.

AWS FIS: Amazon's native service. Deep AWS integration.

Azure Chaos Studio: Microsoft's offering. Generally available since late 2023, with native Azure integration.

Startups: begin with Chaos Mesh or ChaosBlade. Free, capable. As your team matures, evaluate commercial options.

06 A Real Story

Last year, before Singles' Day, I helped an e-commerce client run chaos drills. Their core system was "highly available": multi-AZ deployment, master-replica databases, Redis cluster. In theory, losing any single node shouldn't affect users.

We designed a simple experiment: two hours before peak traffic, kill one Redis node.

What happened next surprised them.

The Redis cluster triggered a rebalance. During rebalance, all requests bypassed cache and hit the database. The database couldn't handle the load. Queries slowed down. Connection pools filled up. The entire chain collapsed.

The root cause? Their Redis client was configured to rebuild connection pools when nodes changed. During rebuild, every request went to the database. They had tested planned single-node failures. They had never tested a node being forcefully removed.

This was a drill, not a real incident. They had until peak traffic to fix it: reconfigure the client, raise connection pool limits, tune degradation policies. On Singles' Day, that bug never appeared.
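
A degradation policy for this kind of failure might look like the sketch below. It is not the client's actual fix, just an illustration under assumptions: a dict stands in for the cache, `BoundedFallback` is a made-up name, and the concurrency limit is arbitrary. The idea is to cap how many cache misses may reach the database at once and serve a degraded answer to the rest.

```python
import threading


class BoundedFallback:
    """Let at most max_concurrent cache misses hit the database; shed the rest."""

    def __init__(self, load_from_db, max_concurrent=2):
        self._load = load_from_db
        self._slots = threading.BoundedSemaphore(max_concurrent)

    def get(self, key, cache, degraded=None):
        if key in cache:
            return cache[key]
        # Non-blocking acquire: if every slot is busy, degrade instead of queueing.
        if not self._slots.acquire(blocking=False):
            return degraded
        try:
            value = self._load(key)
            cache[key] = value
            return value
        finally:
            self._slots.release()
```

With a limit like this in place, losing the cache slows the site down and serves some stale or default content, but the database keeps answering.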

Their CTO later said: "I used to think building high availability was enough. Now I know—high availability isn't built. It's practiced."

07 When Should You Start Chaos Engineering?

Someone inevitably asks: "We're still a startup. Isn't chaos engineering overkill?"

My answer: The same day you start caring about reliability, you can start chaos engineering.

Disaster recovery isn't insurance you buy and forget. It needs validation. You have master-replica? Test whether failover works without losing data. You have multi-AZ? Test if traffic actually shifts. You have rate limiting? Test if it actually triggers.

That validation? That's chaos engineering.

You don't need a mature system to start. The earlier you start, the cheaper fixes are. Blind spots found early cost hours. Blind spots found later cost weeks.

The Bottom Line

When Netflix started chaos engineering in 2011, people thought they were crazy. "You break your own systems on purpose?"

Today, chaos engineering is standard practice at major cloud companies: AWS, Google, Microsoft, Alibaba. Not because they're reckless. Because they've learned: better we find our weaknesses than our users do.

Has your system had its "vaccine"?

Those circuit breakers you added—have they ever actually opened? Those fallback paths—have they ever actually been walked? Those backup nodes—have they ever actually taken traffic? Those alerts—have they ever actually fired?

If you can't answer these questions, try this tonight: shut down one non-critical instance. See what happens.