Cloud Data Warehouse Selection: Redshift, BigQuery, or Self-Managed?

Last month, a friend running an e-commerce company asked me: "Our user data is exploding. MySQL can't handle the analytics queries anymore. We need a data warehouse. AWS has Redshift, Google has BigQuery, Alibaba has something. Which one should we pick? Or should we just roll our own Hadoop cluster?"

I asked: "How much data are we talking?"

"A few tens of terabytes. We've been collecting for two years, tens of millions of rows per day."

"Analytics workload?"

"BI dashboards, user behavior analysis, operations reports. Occasionally some complex ad‑hoc SQL."

"Your team?"

"Two backend engineers, one part‑time data analyst. No one dedicated to big data."

I said: "For your situation, do not build it yourself."

This is the dilemma every data‑driven company eventually faces: your transactional database can't keep up with analytics. It's time for a real data warehouse. But the options are overwhelming. Redshift, BigQuery, Snowflake, self‑managed Hadoop… How do you choose?

Today, let's talk about cloud data warehouse selection. Not the usual "it depends" fluff, but a real framework: What fits your data volume, your team's skills, and your query patterns?

01 First, Understand: A Data Warehouse Is Not Just a Bigger Database

Many people think a data warehouse is just turning up the dials on MySQL. That's the first trap.

OLTP databases (MySQL, PostgreSQL) are designed for transactions—high concurrency, small queries, strong consistency. Run a few heavy analytics queries, and they'll drag down your online business.

Data warehouses are designed for analytics—low concurrency, massive queries, scanning billions of rows. They can return a query that scans terabytes in seconds—something OLTP databases can't do.

So the first decision point: If your analytics queries are starting to impact production, or if running a report takes half an hour, it's time for a data warehouse.

02 The Big Three, Briefly

AWS Redshift

Redshift is the veteran, launched in 2013. It's a columnar database built on PostgreSQL, using MPP (massively parallel processing) to distribute data across nodes.

Strengths: Deep integration with the AWS ecosystem—ingest from S3, RDS, Kinesis seamlessly. Reserved instance pricing makes steady workloads predictable.

Weaknesses: Not truly compute‑storage separated. You pick node types and count; scaling isn't instant. Configuration isn't trivial.

Google BigQuery

BigQuery is the cloud‑native pioneer, launched in 2010 (even earlier than Redshift). It's fully serverless—storage and compute are separated, billed independently. Compute spins up only when you query.

Strengths: Zero ops—no node selection, no tuning. Pay‑per‑query, perfect for variable workloads. Ridiculously fast at scanning massive tables.

Weaknesses: Tied tightly to the Google ecosystem. Cross‑cloud data sources incur network egress costs. Sustained high load can be more expensive than reserved instances.

Self‑Managed (Hadoop/Spark)

Roll your own data lake or warehouse with tools like EMR, Hive, Spark SQL, Presto.

Strengths: Total control. Mix and match open‑source components. No vendor lock‑in.

Weaknesses: Ops overhead is massive—tuning, monitoring, scaling, troubleshooting. You need a team that knows the big‑data stack.

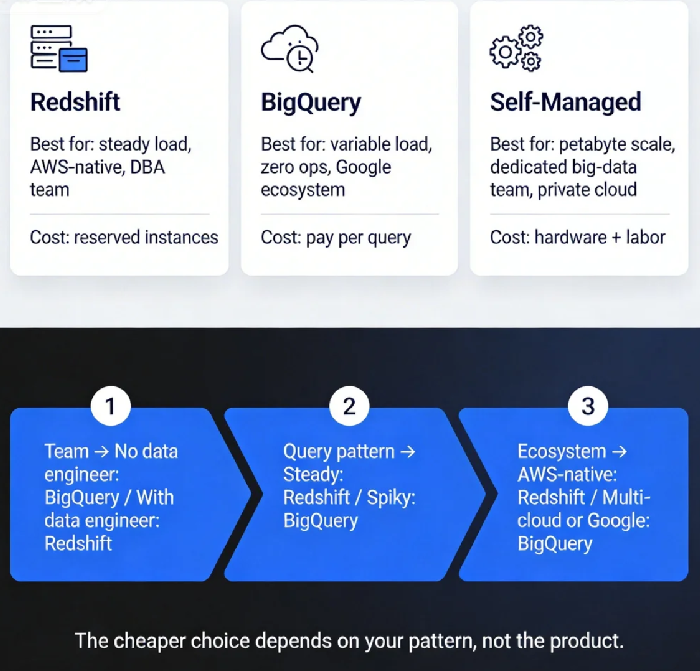

03 Counter‑Intuitive: Redshift Isn't Always Cheaper Than BigQuery

There's a common belief: Redshift is the "traditional" option, so it's cheaper. BigQuery is the "cloud‑native" option, so it's more expensive.

That's true in some scenarios. Flip the scenario, and it reverses.

Scenario 1: Steady load, continuous queries

Say you run the same dashboards every day, 24/7. With Redshift, you buy reserved instances. Fixed monthly cost. Let's say 10 nodes run you $2,000–3,000/month.

With BigQuery, pay‑per‑query under the same load might hit $4,000–5,000/month. Here, Redshift wins.

Scenario 2: Spiky load, ad‑hoc analysis

Your data scientists run exploratory queries during the day, but nights are quiet. With BigQuery, you pay only for queries executed. No queries, no cost.

With Redshift, your cluster is still running overnight, costing the same. Here, BigQuery wins.

Real data point: A SaaS company I worked with moved from Redshift to BigQuery. Their monthly bill dropped from $8,000 to $3,000. Their query pattern was "busy daytime, near‑zero at night"—perfect for BigQuery's pay‑per‑query model.

Counter‑intuitive conclusion: Which is cheaper depends on your query pattern, not the product itself.

04 What About Self‑Managed? Does It Really Save Money?

Many companies eye open‑source stacks thinking "free software = cheaper." Let's do the math.

Infrastructure: An EMR cluster costs about the same as Redshift nodes of similar size.

People: You need someone to build the cluster, tune it, fix failures, upgrade versions. A big‑data engineer costs at least $80–120K/year. Even half‑time, that's $40–60K/year.

Time: Getting a production‑ready Hive warehouse? At least a week for a basic setup. A month to make it reliable. And that's assuming your team already knows the stack.

A friend's company once insisted on self‑hosting Hadoop because "open source is free." Three months later, they'd spent a fortune on engineering time, queries were painfully slow, and they eventually migrated to Redshift. The self‑hosting experiment cost more than three years of Redshift.

When does self‑managed make sense?

Data volume is truly massive (petabytes) where cloud vendor costs become prohibitive.

You already have a seasoned big‑data team in‑house.

Data sovereignty forces private or hybrid deployment.

You have a hard dependency on specific open‑source components.

Otherwise, cloud data warehouses are simply less painful.

05 A Decision Framework: Three Questions

Question 1: What's your team's capacity?

No dedicated data engineer → BigQuery (or Snowflake) for zero‑ops.

Have DBAs or data engineers → Redshift is viable, but someone will need to tune it.

Question 2: What's your query pattern?

Steady, predictable load → Redshift reserved instances likely cheaper.

Spiky, ad‑hoc, variable → BigQuery's pay‑per‑query wins.

Mixed → Consider Redshift Spectrum (separate storage/compute) or Snowflake.

Question 3: What's your cloud ecosystem?

All‑in on AWS (S3, RDS, Kinesis) → Redshift integrates most smoothly.

Multi‑cloud or Google‑centric (GA, GCS) → BigQuery is the natural fit.

Already on Snowflake or need multi‑cloud → Snowflake is purpose‑built for this.

06 A Real Story: From Self‑Managed Hive to BigQuery

Last year, I helped a gaming company. They stored billions of user‑event rows daily in HDFS and ran analytics with Hive. Over time, Hive slowed down, operations got heavier. They went from one big‑data engineer to three, and they were still struggling.

We evaluated and recommended BigQuery.

Migration: They streamed data from HDFS to GCS using Dataflow, then loaded it into BigQuery. Query times dropped from 20 seconds on average to 3 seconds. Operations overhead went to nearly zero. The team of three was reduced to one (the others moved to data modeling and business analytics).

Cost? Their self‑managed Hadoop cluster ran $20,000/month (including instances and labor). BigQuery now costs $12,000/month. A 40% reduction—plus the team now focuses on value, not infrastructure.

The Bottom Line

Back to my friend with the e‑commerce company. His data volume: tens of terabytes. Team: no dedicated big‑data engineer. Query pattern: operational dashboards and ad‑hoc analysis.

I told him: "Start with BigQuery."

Why? Because his biggest risk was building something they couldn't maintain. Redshift needs node selection, tuning, monitoring. Self‑managed was a non‑starter. BigQuery is zero‑ops, pay‑per‑query, low commitment. Perfect for his stage.

Three months later, he messaged me: "Our BI dashboards went from 5 minutes to 5 seconds. Operations thinks we replaced the whole system."

The worst mistake in data warehouse selection isn't picking the "wrong" one. It's not picking any—waiting until your analytics are so broken that you're firefighting every report. Data doesn't wait. Neither should you.

Where is your data living right now? And who's going to manage it tomorrow?