Cloud Circuit Breaking and Fallback in Practice: Don’t Let a Small Failure Cascade

Last Black Friday, an e‑commerce client’s core system collapsed. The root cause was a non‑critical service, product recommendations, that started slowing down. Response times crept from 50ms to 3 seconds, then 5 seconds, and then calls began timing out. The recommendations service didn’t die, but the order service that called it was stuck waiting. The order service’s thread pool filled up and new requests queued. Soon, the services upstream of orders began timing out as well. The entire checkout chain went down like an avalanche, and users couldn’t place orders.

One slow, non‑critical service took down the whole site.

This is the classic tragedy of microservice architectures: a small failure, amplified through the call chain, brings everything down.

Today, let’s talk about circuit breaking and graceful degradation. Not the “circuit breakers are important” fluff, but a practical guide: how cascading failures happen, how to configure a circuit breaker, how to design fallbacks, and how to make sure a small failure kills only itself—not its neighbors.

01 How a Cascading Failure Happens

A cascading failure doesn’t happen instantly. It develops in three stages.

Stage 1: Timeout accumulation

A downstream service slows down. Upstream callers wait. Each request holds onto a thread for longer than usual. Threads don’t release.

Stage 2: Resource exhaustion

The thread pool fills up. New requests can’t enter. They queue or get rejected. The upstream service’s own response time begins to increase, affecting services further up the chain.

Stage 3: Chain reaction

The upstream’s upstream also starts timing out, queuing, rejecting. The failure propagates all the way to the entry point. The whole link becomes paralyzed.

Counter‑intuitive truth: The root cause of a cascade is rarely a service that’s completely dead. It’s a service that’s just slow enough to tie up resources without failing fast. Complete failure is comparatively easy to handle, because calls usually fail immediately (a refused connection, for example) and release their threads. Slow death is the real poison.
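
The arithmetic behind stages 1 and 2 follows from Little's law: the average number of busy threads equals the request rate multiplied by how long each request holds a thread. Here is a rough sketch. The 100 requests per second and the 200-thread pool are hypothetical numbers chosen for illustration, not figures from the incident.

```python
import math

def threads_in_use(requests_per_second: int, latency_ms: int) -> int:
    """Little's law: average concurrency = arrival rate x time each request is held."""
    return math.ceil(requests_per_second * latency_ms / 1000)

POOL_SIZE = 200  # hypothetical thread pool size
RATE = 100       # hypothetical requests per second

for latency_ms in (50, 3000, 5000):
    needed = threads_in_use(RATE, latency_ms)
    status = "fits" if needed <= POOL_SIZE else "pool exhausted"
    print(f"{latency_ms:>5} ms per call -> {needed:>3} threads busy ({status})")
```

At 50ms the calls keep about 5 threads busy. At 3 seconds the same traffic needs 300, more than the pool holds, even though not a single extra request arrived.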

02 Timeouts: The Prerequisite for Circuit Breaking

Many people set timeouts arbitrarily: 3 seconds, 5 seconds, “that should be enough.” But if timeouts are wrong, circuit breakers are useless.

How to set timeouts:

First, understand the downstream service’s normal response time. What’s the P99?

Set the timeout to 2‑3× the P99. Too short, and you’ll kill healthy requests. Too long, and during a fault, threads are held for too long.

Use separate settings for different scenarios: read‑heavy endpoints can usually take shorter timeouts; write‑heavy endpoints often need longer ones.
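
The steps above can be sketched in a few lines. This is a minimal illustration, assuming you can export recent latency samples in milliseconds. The function names, the nearest-rank percentile method, and the 2.5× default multiplier are choices made for this example, and the sample data is made up.

```python
def percentile(samples, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = (pct * len(ordered) + 99) // 100  # integer ceiling of pct/100 * n
    return ordered[max(rank, 1) - 1]

def suggest_timeout_ms(latencies_ms, multiplier=2.5):
    """Timeout as a multiple of the observed P99 (the text suggests 2-3x)."""
    return percentile(latencies_ms, 99) * multiplier

# Made-up sample: most calls take 50 ms, a few are slower.
samples = [50] * 980 + [200] * 15 + [900] * 5

p99 = percentile(samples, 99)
print(f"P99 = {p99} ms, suggested timeout = {suggest_timeout_ms(samples)} ms")
```

In practice the percentile would come from your metrics system rather than a list in memory, and it is worth recomputing when the downstream service changes.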

Real example: A company called a recommendations service with a normal P99 of 200ms. Their timeout was set to 10 seconds. When the recommendations service slowed to 5 seconds, every call still fell within the timeout, so callers waited the full 5 seconds each. Thread pools filled up quickly, and a cascade followed. They changed the timeout to 500ms (2.5× the P99). When the problem recurred, calls failed fast, thread pools stayed healthy, and the system survived.

Remember: No timeout means no circuit breaker. Too long a timeout means the circuit breaker is worthless.

03 Circuit Breaker: Automatically Cut the Faulty Link

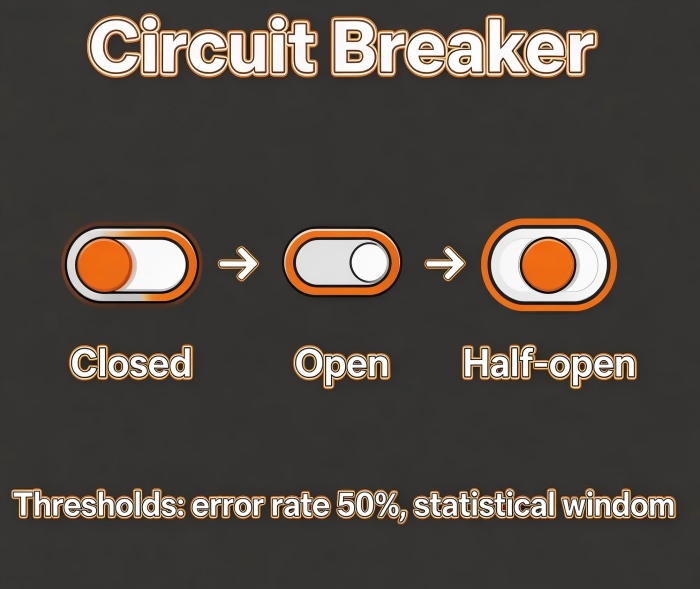

A circuit breaker has three states. The name comes from the electrical circuit breaker, which trips to protect the rest of the circuit.

Closed – Normal operation. Requests pass through. The breaker counts errors or slow calls.

Open – The error rate exceeds the threshold. The breaker trips. Subsequent requests fail immediately without calling the downstream service.

Half‑open – After a waiting period, a limited number of test requests are allowed through. If they succeed, the breaker closes. If they fail, it returns to open and the waiting period starts again.
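
To make the three states concrete, here is a minimal single-threaded sketch of the state machine. It is not how any particular library implements it. For brevity it trips on consecutive failures rather than an error rate, and the class name, parameter names, and injectable clock are all assumptions made for this example.

```python
import time

class CircuitOpenError(Exception):
    """Raised when the breaker rejects a call without trying the downstream."""

class CircuitBreaker:
    def __init__(self, failure_threshold=5, open_seconds=10.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold  # consecutive failures before tripping
        self.open_seconds = open_seconds            # how long to stay open
        self.clock = clock                          # injectable so tests can fake time
        self.state = "closed"
        self.failures = 0
        self.opened_at = 0.0

    def call(self, fn):
        if self.state == "open":
            if self.clock() - self.opened_at < self.open_seconds:
                raise CircuitOpenError("failing fast, downstream not called")
            self.state = "half_open"  # wait is over: let a trial call through
        try:
            result = fn()
        except Exception:
            self._on_failure()
            raise
        self._on_success()
        return result

    def _on_failure(self):
        self.failures += 1
        # A failed trial call reopens immediately; otherwise wait for the threshold.
        if self.state == "half_open" or self.failures >= self.failure_threshold:
            self.state = "open"
            self.opened_at = self.clock()

    def _on_success(self):
        self.failures = 0
        self.state = "closed"
```

A caller wraps each downstream call as `breaker.call(lambda: fetch_recommendations(user_id))`, where `fetch_recommendations` is a hypothetical client function, and treats `CircuitOpenError` as the signal to use a fallback. A production breaker also needs locking and a cap on concurrent half-open trials.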

How to set thresholds:

Error rate threshold: Typically 50%. Higher means more failures before tripping (wider impact). Lower means more sensitive (risk of false trips).

Slow call threshold: The duration above which a call counts as slow. Typically 2‑3× the P99.

Statistical window: How many seconds of data to evaluate (e.g., 10s, 20s, 60s). Too short leads to jitter; too long delays reaction.

Sleep window: How long the breaker stays open before transitioning to half‑open. Usually 5‑30 seconds. Too short, and the downstream may not have recovered; too long, and you waste recovery time.
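
The four settings above can be pictured as one small config object plus a rolling window that decides when to trip. This is a sketch under stated assumptions. It counts errors and slow calls in a single combined rate, which is a simplification. The `min_calls` guard is an addition not mentioned above, included so that one failure out of two calls cannot trip the breaker. All names are hypothetical.

```python
from collections import deque
from dataclasses import dataclass

@dataclass(frozen=True)
class BreakerSettings:
    error_rate_threshold: float = 0.5  # trip once 50% of calls in the window are bad
    slow_call_ms: float = 500.0        # e.g. 2.5x a 200 ms P99
    window_seconds: float = 10.0       # statistical window
    sleep_seconds: float = 10.0        # time spent open before half-open (not used below)
    min_calls: int = 10                # assumption: ignore windows with too few calls

class RollingWindow:
    def __init__(self, settings):
        self.settings = settings
        self.calls = deque()  # (timestamp, was_bad) pairs, oldest first

    def record(self, now, ok, duration_ms):
        bad = (not ok) or duration_ms > self.settings.slow_call_ms
        self.calls.append((now, bad))
        self._evict(now)

    def _evict(self, now):
        cutoff = now - self.settings.window_seconds
        while self.calls and self.calls[0][0] <= cutoff:
            self.calls.popleft()

    def should_trip(self, now):
        self._evict(now)
        total = len(self.calls)
        if total < self.settings.min_calls:
            return False
        bad = sum(1 for _, was_bad in self.calls if was_bad)
        return bad / total >= self.settings.error_rate_threshold
```

Shrinking `window_seconds` makes the breaker react faster but also makes it jumpier, which is the trade-off described above.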

Tooling options:

Hystrix (Netflix): feature‑rich and mature, but in maintenance mode and no longer actively developed.

Resilience4j: lightweight, designed for Java 8+, currently the mainstream choice.

Sentinel (Alibaba open‑source): powerful, dynamic rules, good for large scale.

Service mesh (Istio, Linkerd): circuit breaking at the infrastructure layer, transparent to applications.

04 Fallback: Give the User a Respectable Answer

Circuit breaking stops the call downstream. Fallback decides what happens after the circuit trips.

The core principle: Don’t show the user a blank screen or a raw error code. Give them an acceptable result.

Common fallback strategies:

Return cached data: Recommendations service fails? Return the last cached recommendations. Not fresh, but better than nothing.

Return a default value: Inventory service fails? Show “in stock” (optimistic) or “temporarily unavailable, please try again later.”

Disable non‑core features: Turn off “customers also bought” or “recently viewed.” Keep the checkout flow alive.

Page degradation: Remove dynamic modules; show static content.
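
The first two strategies chain naturally: try the live call, fall back to the last good result, then to a static default. Here is a minimal sketch. The class, the in-memory cache, and the default list are hypothetical, and a real system would likely use a shared cache with an expiry.

```python
# Hypothetical static content shown when nothing better is available.
DEFAULT_RECOMMENDATIONS = ["bestseller-1", "bestseller-2"]

class RecommendationsWithFallback:
    def __init__(self, fetch_live):
        self.fetch_live = fetch_live  # callable(user_id) -> list; may raise
        self.last_good = {}           # user_id -> last successful result

    def get(self, user_id):
        """Return (items, source), where source is 'live', 'cache' or 'default'."""
        try:
            items = self.fetch_live(user_id)
        except Exception:
            if user_id in self.last_good:
                return self.last_good[user_id], "cache"  # stale but personalized
            return list(DEFAULT_RECOMMENDATIONS), "default"
        self.last_good[user_id] = items
        return items, "live"
```

Returning the source alongside the data lets the page decide how to render it and lets you count how often each fallback is served.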

Fallback levels:

Level 1: Disable the least important features. Users barely notice.

Level 2: Disable secondary features. Users notice something missing, but core functions work.

Level 3: Keep only the core transaction path. The page looks sparse, but users can still place orders.
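
One way to encode these levels is a table mapping each optional feature to the level at which it switches off. The feature names and their level assignments below are hypothetical; what counts as core is a business decision.

```python
from enum import IntEnum

class Level(IntEnum):
    NORMAL = 0
    ONE = 1    # least important features off
    TWO = 2    # secondary features off
    THREE = 3  # core transaction path only

# Optional feature -> level at which it is switched off (hypothetical assignment).
FEATURE_OFF_AT = {
    "recently_viewed": Level.ONE,
    "customers_also_bought": Level.ONE,
    "product_reviews": Level.TWO,
    "personalized_search": Level.TWO,
    "coupons": Level.THREE,
}

# Features that stay on at every level.
CORE_FEATURES = {"cart", "checkout", "payment"}

def enabled_features(level):
    """Features still served at the given degradation level, sorted by name."""
    optional = {name for name, off_at in FEATURE_OFF_AT.items() if level < off_at}
    return sorted(CORE_FEATURES | optional)

for level in Level:
    print(level.name, enabled_features(level))
```

Keeping the table in configuration rather than code means an operator can raise the level during an incident without a deploy.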

That e‑commerce client changed their fallback: when the recommendations service failed, the order page didn’t show “customers also bought.” It showed a static banner instead. Users barely noticed the change, and orders continued to flow.

05 Rate Limiting: Don’t Let Traffic Overwhelm You

Circuit breaking and fallback handle downstream failures. Rate limiting handles overload arriving from upstream callers.

Common rate‑limiting algorithms:

Token bucket: Tokens are added at a fixed rate. Each request consumes a token. If no token is available, the request waits or is rejected. Allows bursts up to the bucket’s capacity.

Leaky bucket: Requests enter a bucket and drain at a fixed rate. Smooths out spikes; good for protecting downstream.

Sliding window: Counts requests in the last N seconds. More accurate than a fixed window, which can let through up to twice the limit around a window boundary.
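
As an illustration of the first algorithm, here is a minimal single-threaded token bucket that rejects instead of waiting. The class and parameter names are assumptions made for this example, and the clock is injectable so the behavior can be checked without sleeping.

```python
import time

class TokenBucket:
    def __init__(self, rate_per_second, capacity, clock=time.monotonic):
        self.rate = rate_per_second    # tokens added per second
        self.capacity = capacity       # maximum burst size
        self.clock = clock
        self.tokens = float(capacity)  # start full so an initial burst is allowed
        self.updated_at = clock()

    def allow(self, cost=1.0):
        """Refill based on elapsed time, then spend `cost` tokens if available."""
        now = self.clock()
        elapsed = now - self.updated_at
        self.updated_at = now
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

With `rate_per_second=2` and `capacity=3`, three back-to-back requests pass, the fourth is rejected, and one more is admitted after half a second.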

How to set rate limits:

Load‑test to find the QPS capacity of a single instance. Set the limit at 70‑80% of that capacity for a safety margin.

Different limits for different endpoints: read endpoints can have higher limits; write endpoints lower.

What to do when rate‑limited? Queue the request, reject immediately (HTTP 429), or fall back to a default response.
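
Putting these points together: derive the limit from a load-test number, enforce it with a sliding window, and answer rejected requests with HTTP 429. This is a sketch only. The 400 QPS capacity, the 75% headroom, and the handler shape are illustrative assumptions, and a real gateway would do this per instance or in a shared store.

```python
from collections import deque

def safe_limit(capacity_qps, headroom=0.75):
    """Limit set at a fraction (70-80% in the text) of load-tested capacity."""
    return int(capacity_qps * headroom)

class SlidingWindowLimiter:
    def __init__(self, limit, window_seconds=1.0):
        self.limit = limit
        self.window_seconds = window_seconds
        self.timestamps = deque()  # arrival times of admitted requests

    def try_acquire(self, now):
        cutoff = now - self.window_seconds
        while self.timestamps and self.timestamps[0] <= cutoff:
            self.timestamps.popleft()  # forget requests older than the window
        if len(self.timestamps) < self.limit:
            self.timestamps.append(now)
            return True
        return False

def handle(limiter, now):
    """Return (status, body): reject immediately with 429 when over the limit."""
    if limiter.try_acquire(now):
        return 200, "ok"
    return 429, "system busy, please try again later"

print(safe_limit(400))  # hypothetical load-test result of 400 QPS per instance
```

Rejecting immediately keeps threads free. Queuing is only worth it when the wait is short and bounded.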

Counter‑intuitive truth: Rate limiting isn’t about preventing you from exceeding a number. It’s about making sure you fail gracefully when you do. Better to reject some requests than to let all of them time out.

06 A Real Story: From Cascade to Rock‑Solid

Back to the e‑commerce client. After the Black Friday incident, they made several changes:

First, added timeouts to every downstream call, set at 2‑3× the P99. The recommendations call went from a 10‑second timeout to 500ms.

Second, introduced a circuit breaker. If the recommendations service’s error rate exceeded 50%, the breaker tripped. After 10 seconds, it tried a half‑open test.

Third, implemented fallback logic. When the circuit was open, the order page didn’t call recommendations. It showed a static banner instead. Users barely noticed.

Fourth, added rate limiting at the API gateway. Total QPS was capped. Requests above the limit received a “system busy, please try again later” response.

During the next big sale, the recommendations service had another problem. This time, the circuit breaker tripped within seconds. The order service used the fallback immediately. The whole system stayed stable. Their ops lead said: “Before, one small failure could kill the entire site. Now it kills only itself. No collateral damage.”

The Bottom Line

Microservice architectures give you flexibility. They also give you fragility. One service can bring down many others.

Timeouts, circuit breakers, fallbacks, and rate limiting aren’t “nice to have.” They must be configured correctly. Too long a timeout is as bad as no timeout. Too sensitive a threshold causes false trips. A crude fallback frustrates users.

That ops lead summed it up: “I used to think stability meant nothing ever fails. Now I think resilience means failures happen, but only the broken part breaks. The rest keeps running.”

How resilient is your system today?