Database Overloaded? A Practical Guide to Read‑Write Splitting and Sharding

Last year, an ed‑tech client told me their database was dying. Every evening, CPU hit 90%, response times jumped from 50ms to 3 seconds. Users complained. The CEO kept asking for answers.

“What are you doing about it?” I asked.

“Adding more CPU and memory,” they said. “But we’re already at the top‑end RDS instance. The next tier costs a fortune, and it only buys us a few more months.”

This is the classic growth trap. Your business is growing, but your database can’t keep up. Vertical scaling (bigger instances) has hit diminishing returns. Horizontal scaling (more machines) feels like black magic.

Today, let’s talk about the two main ways to scale a database: read‑write splitting and sharding. Not the theoretical “it’s important” fluff, but a practical guide: when to use each, how to implement them, and how to avoid the common traps.

01 First, Diagnose: Is Your Bottleneck Reads or Writes?

The first step isn’t picking a technology. It’s understanding your workload.

Look at your metrics:

In your slow query log, are SELECT statements the problem, or INSERT/UPDATE/DELETE?

When CPU is high, is the database processing reads or writes?

What’s the read/write ratio of your IOPS?

Here’s the key insight: about 80% of database bottlenecks are read bottlenecks, not write bottlenecks. Most businesses are read‑heavy: browsing products, reading articles, checking orders. Writes are comparatively rare.

That ed‑tech client was a classic read‑heavy case. Users watched courses in the evening. Read queries flooded the database. The primary CPU was pegged—mostly on reads. Write traffic was low.

Counter‑intuitive truth: When your database is struggling, your first move shouldn’t be sharding. It should be read‑write splitting. Splitting solves 80% of bottlenecks and is far simpler.

02 Read‑Write Splitting: The Cure for Read‑Heavy Workloads

The idea is simple: the primary handles writes; replicas handle reads.

Primary: INSERT, UPDATE, DELETE. The source of truth.

Replicas: Asynchronously replicate from the primary. Handle SELECT queries.

How to implement in the cloud?

RDS, Aurora, and Cloud SQL all support adding read replicas with a few clicks. Create replicas, point your read traffic to them, and the primary’s load drops immediately.

How to route traffic?

Application layer: Configure multiple data sources. Use annotations or rules to decide primary vs replica.

Middleware layer: ShardingSphere, ProxySQL, or Vitess. Transparent to your application.

Cloud native: RDS read‑replica endpoints that automatically distribute read queries.

The big trap: replication lag.

Replicas are eventually consistent. Lag is usually sub‑second, but during heavy write loads it can reach seconds or minutes. If you write data and immediately read it from a replica, you may see stale data.

Solutions:

Critical reads go to the primary (e.g., check order details immediately after placing an order).

Business tolerance: seeing a new course in the list a few seconds late is fine.

Monitor lag and alert when it exceeds a threshold.

That ed‑tech client added two read replicas and moved read traffic off the primary. CPU on the primary dropped from 90% to 30%. That bought them another year of growth before they needed the next step.

03 When Read‑Write Splitting Isn’t Enough

Eventually, writes become the bottleneck. Or your data volume grows so large that even replicas are slow. That’s when you need sharding.

Sharding comes in two flavors:

Vertical sharding (split by business domain): Separate tables into different databases: orders, users, products. Simple, but cross‑domain joins become impossible.

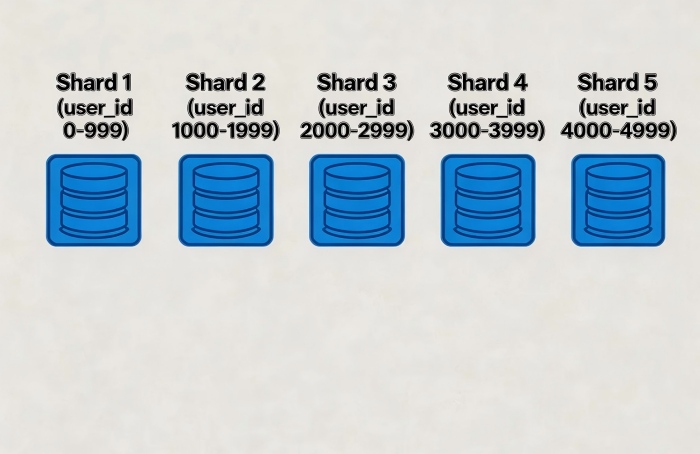

Horizontal sharding (split a single table): Distribute rows of the same table across multiple databases based on a shard key (e.g., user_id). Complex, but scales linearly.

When do you need horizontal sharding?

A single table exceeds 5 million rows and queries are slowing.

A single table exceeds 10 million rows and indexes become less effective.

Write TPS on the primary has hit its limit, and adding replicas doesn’t help (because writes must go to the primary).

The hardest problem: choosing a shard key.

The shard key determines how data is distributed and how queries are routed.

Shard by user_id: All of a user’s orders, cart, and profile are in the same shard. Great for user‑centric queries.

Shard by order_id: Good for order‑centric queries, but user‑centric queries become cross‑shard.

Shard by time: Good for logs or time‑series data. Easy to archive.

Golden rule: 80% of your queries should include the shard key. If most queries need to scan multiple shards, you’ve chosen the wrong key.

04 The Cost of Sharding: Are You Ready?

Read‑write splitting is simple—add replicas, route traffic, done. Sharding is major surgery. Once you shard, you can’t easily go back.

Cost 1: Cross‑shard queries

A simple SELECT that used to run on one node now needs to query multiple shards and aggregate results. Pagination, sorting, and COUNT(*) become complex.

Cost 2: Distributed transactions

ACID transactions that spanned a single database now span multiple nodes. Distributed transaction protocols (XA, TCC) are slow or complex. In practice, many teams relax to eventual consistency and accept the trade‑offs.

Cost 3: Data rebalancing

When you need to add more shards (e.g., from 8 to 16), you have to redistribute data. This may require downtime or complex dual‑write migration.

Cost 4: Global unique IDs

Auto‑increment primary keys no longer work. You need a global ID generator (Snowflake, Leaf) that works across shards.

Counter‑intuitive truth: Sharding isn’t optimization—it’s a compromise. You trade operational complexity for capacity. If you can avoid it, avoid it.

05 A Smooth Evolutionary Path

Don’t start with sharding. Follow this progression:

Stage 1: Single database + read replicas

Add read replicas. Move read traffic off the primary. Most companies stop here and stay here for years.

Stage 2: Vertical sharding

Split by business domain. Orders, users, products—each in its own database. This is relatively easy and doesn’t change core business logic dramatically.

Stage 3: Horizontal sharding

Split the largest, most write‑intensive tables horizontally. Choose a shard key carefully.

Stage 4: Cloud‑native distributed database

If horizontal sharding becomes too painful, consider a cloud‑native distributed database like Aurora, TiDB, CockroachDB, or Spanner. They handle sharding, distributed transactions, and elastic scaling for you.

That ed‑tech client? They’ve been at Stage 1 for two years. They’ve added replicas, tuned queries, and recently did some vertical sharding (courses database separated from users database). They haven’t needed horizontal sharding yet. Their tech lead told me: “I almost jumped straight to sharding. Glad I didn’t.”

06 A Real Story: From One Database to Sharded

An e‑commerce client’s orders table grew to 200 million rows over three years. Queries slowed. Writes struggled. We followed a gradual path:

Step 1: Read‑write splitting. Added two read replicas. Bought six months.

Step 2: Vertical sharding. Split the order header table from the order line‑item table (line items were the real problem). Bought another six months.

Step 3: Horizontal sharding. Sharded the order line‑item table by user_id (hash) into 8 databases. The order header table followed the same shard key. A user’s orders and line items stayed in one shard.

Migration: dual‑writes to old and new, backfilled historical data, then cut over. Two weeks, minimal downtime.

Today, that table has 500 million rows spread across 8 shards, each about 60‑70 million rows. Queries are fast. They plan to expand to 16 shards next year.

Their ops lead said: “The path was longer, but we never had a ‘big bang’ outage. Each step was manageable.”

The Bottom Line

When your database starts creaking, don’t panic. Diagnose first: reads or writes? Most likely, it’s reads. Add read replicas. That alone will buy you months or years.

When that’s not enough, consider vertical sharding—split by business domain. Only when writes are the bottleneck or a single table is enormous should you consider horizontal sharding.

Sharding is a last resort, not a first choice. Avoid it if you can, delay it if you must. The complexity is real, and the costs add up.

That e‑commerce ops lead summed it up: “Database scaling is like widening a road. First, add lanes (read replicas). When that’s full, build an overpass (sharding). But don’t build the overpass before the road is even crowded.”

Where is your database on that road?